Ceph Storage Alternative

What is Ceph?

Ceph is an open-source distributed storage system that provides unified block, file, and object storage interfaces. It is widely used in private cloud environments, especially in combination with OpenStack.

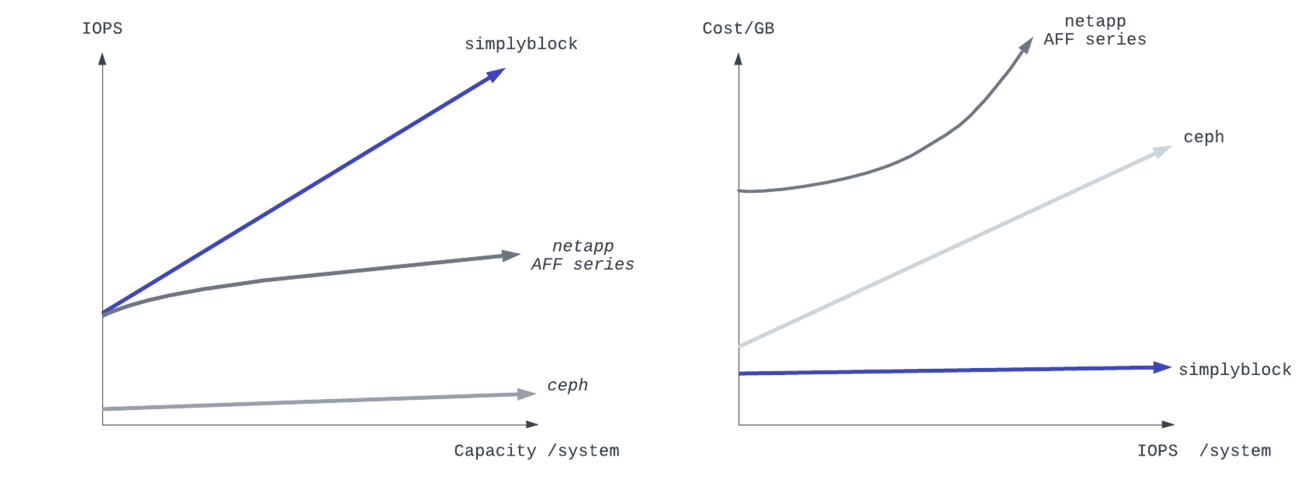

Hardware-Efficient, High-Performance Storage

Simplyblock is a more scalable and cost-efficient alternative to Ceph with very high IOPS: up to 9x more effective storage and 100x more IOPS/GB than Ceph. Hybrid cloud storage is available for OpenStack, OpenNebula, and VMware. It also provides powerful Kubernetes storage.

Simplyblock’s Kubernetes Storage platform delivers high-performance persistent storage tailored for Kubernetes environments.

Why simplyblock as an Alternative to Ceph?

What are the Downsides of Ceph?

Ceph was designed and built for spinning disks, and this legacy architecture is hard to overcome. Despite its many advantages, Ceph makes inefficient use of CPU and disk resources and delivers low IOPS/GB. Ceph reaches a maximum of 4,000-8,000 IOPS per CPU core, while simplyblock delivers up to 250,000 IOPS per CPU core (up to 50x more).
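The per-core comparison can be checked with simple arithmetic. The sketch below uses only the figures quoted in this section; the constant and function names are illustrative and not part of any simplyblock or Ceph tooling.

```python
# Figures quoted in the text (IOPS per CPU core).
CEPH_IOPS_PER_CORE = (4_000, 8_000)    # low and high end of the quoted range
SIMPLYBLOCK_IOPS_PER_CORE = 250_000    # quoted "up to" figure


def speedup_range(baseline_range, target):
    """Return (smallest, largest) speedup of `target` over a baseline range."""
    low, high = baseline_range
    # The smallest speedup is measured against the best baseline value,
    # the largest against the worst baseline value.
    return target / high, target / low


min_speedup, max_speedup = speedup_range(CEPH_IOPS_PER_CORE, SIMPLYBLOCK_IOPS_PER_CORE)
print(f"{min_speedup:.2f}x to {max_speedup:.2f}x")  # prints "31.25x to 62.50x"
```

The quoted "up to 50x" sits inside this range, so the exact factor depends on which end of the Ceph range is taken as the baseline.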

Simplyblock as an Alternative to Ceph

Simplyblock offers the best features of Ceph (great flexibility and reliability, superior integration with OpenStack, outstanding cluster architecture) and adds superior IOPS performance, storage efficiency, and access latency. It is optimized for NVMe, based on the NVMe®/TCP protocol, and offers hybrid cloud storage capability.

Thanks to a highly efficient implementation of distributed erasure coding and very CPU-efficient compression, simplyblock reaches a raw-to-effective storage ratio of 1:1.5, which is 4-5x better than Ceph and other replication-based HCI solutions. The average access latency for simplyblock is below 0.1 ms, which is about 30 times lower than Ceph’s.
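To see where a figure in the 4-5x range can come from, it helps to compare the quoted 1:1.5 ratio with a replicated baseline. The sketch below assumes three-way replication for that baseline, which is a common setup for replicated pools but is not stated in the text; all names are illustrative.

```python
# Quoted in the text: 1 unit of raw capacity yields 1.5 units of effective capacity.
SIMPLYBLOCK_EFFECTIVE_PER_RAW = 1.5

# Assumption (not stated in the text): the replicated baseline keeps 3 full copies.
ASSUMED_REPLICAS = 3


def replicated_effective_per_raw(replicas: int) -> float:
    """Effective capacity per unit of raw capacity when each block is stored `replicas` times."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return 1.0 / replicas


def efficiency_gain(effective_per_raw: float, replicas: int) -> float:
    """How many times more effective capacity the given ratio yields than replication."""
    return effective_per_raw / replicated_effective_per_raw(replicas)


gain = efficiency_gain(SIMPLYBLOCK_EFFECTIVE_PER_RAW, ASSUMED_REPLICAS)
print(f"Gain over {ASSUMED_REPLICAS}x replication: {gain:.1f}x")
```

Under this assumption the gain works out to roughly 4.5x, which falls inside the quoted 4-5x range. With two-way replication the same calculation gives about 3x, so the result is sensitive to the replication factor assumed for the baseline.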

The Simplyblock vs Ceph comparison outlines key differences in performance, scalability, and cost-efficiency.

Why simplyblock?

Hardware-efficient: Up to 5x more effective storage per GB than Ceph on the same raw storage

Highly Performant: Up to 50x more IOPS per CPU core or per GB. NVMe-optimized storage.

Deep Integration: Full native integration for OpenStack Cinder, Proxmox, OpenNebula, and VMware.

Complete Solution: File and object storage available in combination with the Red Hat GFS2 filesystem and OpenStack Swift for S3.

Ultra-low access latency and great scalability

Like Ceph, simplyblock is based on commodity server hardware rather than proprietary SAN technology, and it uses NVMe®/TCP. It provides very low access latency, high IOPS per GB and per CPU core, great scalability, and flexibility. Simplyblock can be used as a hybrid cloud storage solution and deployed on any public or private cloud, at the edge, and on bare metal.