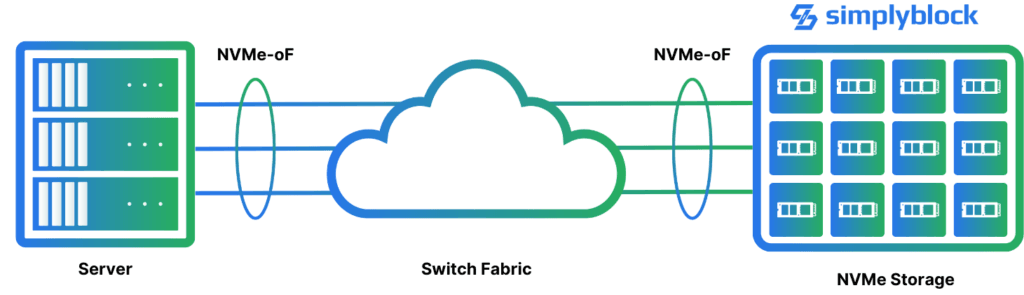

NVMe is a command set designed primarily for flash storage devices. It was created as a high-performance replacement for SCSI on the PCIe (PCI Express) bus and was later extended with NVMe over Fabrics (NVMe-oF) to support remotely attached flash storage pools. Although NVMe-oF supports several transport layers, NVMe/TCP is the most powerful and widely used implementation for replacing DAS (Direct-Attached Storage), and it is the default modern protocol for software-defined storage such as simplyblock.

NVMe over TCP

NVMe/TCP is the most powerful and cost-effective way to utilize NVMe over Fabrics (NVMe-oF).

Modern High-Performance Standard for Network-Attached Storage

NVMe/TCP is the most powerful protocol in the NVMe-oF family. It delivers high performance and low overhead on commodity Ethernet TCP/IP networks, with broader compatibility and a lower entry cost than transports such as InfiniBand or Fibre Channel. NVMe/TCP extends NVMe across network boundaries and data centers. It needs no drivers beyond those already shipped with the operating system, and a connected volume behaves like any locally attached NVMe device. For even greater optimization of NVMe-based architectures, NVMe over Fabrics with SPDK provides enhanced performance and efficiency, unlocking the full potential of fabric-attached flash storage with ultra-low latency and high throughput.
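As a rough illustration of the in-box tooling on Linux, the Python sketch below builds the `nvme connect` command from nvme-cli that attaches an NVMe/TCP subsystem. The target address, the subsystem NQN, and the helper names `nvme_tcp_connect_cmd` and `connect` are hypothetical; port 4420 is the conventional NVMe-oF default, but a given target may listen elsewhere. By default the sketch only prints the command; actually running it would require root privileges, nvme-cli, and the `nvme-tcp` kernel module.

```python
import shlex
import subprocess

# Hypothetical placeholder values; substitute your own target's details.
TARGET_ADDR = "192.0.2.10"                      # documentation-only IP (RFC 5737)
TARGET_NQN = "nqn.2024-01.com.example:subsys1"  # hypothetical subsystem NQN
DEFAULT_PORT = "4420"                           # conventional NVMe-oF port


def nvme_tcp_connect_cmd(traddr: str, nqn: str, trsvcid: str = DEFAULT_PORT) -> list[str]:
    """Return the nvme-cli argument list that attaches an NVMe/TCP subsystem."""
    return [
        "nvme", "connect",
        "--transport=tcp",       # select the TCP transport
        f"--traddr={traddr}",    # target IP address
        f"--trsvcid={trsvcid}",  # target TCP port
        f"--nqn={nqn}",          # NVMe Qualified Name of the subsystem
    ]


def connect(traddr: str, nqn: str, dry_run: bool = True):
    """Print the command (dry run) or execute it via subprocess."""
    cmd = nvme_tcp_connect_cmd(traddr, nqn)
    if dry_run:
        print(shlex.join(cmd))
        return None
    # A real run needs root, nvme-cli, and the nvme-tcp kernel module loaded.
    return subprocess.run(cmd, check=True)


if __name__ == "__main__":
    connect(TARGET_ADDR, TARGET_NQN)
```

Once connected, the remote namespace shows up as an ordinary block device (for example `/dev/nvme1n1`), which is why applications can use it like a local drive.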

NVMe over TCP Storage with Local Storage Performance

Enables storage pools that scale to hundreds or thousands of storage devices and cluster nodes across your data center.

Easy to adopt, since the necessary drivers are included in all major Linux distributions and in Windows Server 2025 or later.

Consolidates storage requirements into a shared pool to maximize NVMe storage device utilization.

Provides disaggregated, hyper-converged, or hybrid storage pool models with the performance and latency of locally attached storage devices.
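The consolidation benefit comes down to simple arithmetic. The sketch below compares capacity utilization for hypothetical per-node demands under two assumptions: with DAS, every node gets an identical local drive sized for the largest single demand; with a shared pool, capacity is provisioned for the aggregate demand plus 20% headroom. The demand figures and the headroom factor are illustrative, not measured.

```python
# Hypothetical per-node capacity demand in TB (illustrative, not measured).
demands_tb = [2.0, 7.0, 3.0, 4.0]


def das_utilization(demands: list[float], drive_tb: float) -> float:
    """DAS: every node carries an identical local drive of drive_tb TB."""
    provisioned = drive_tb * len(demands)
    return sum(demands) / provisioned


def pooled_utilization(demands: list[float], headroom: float = 0.2) -> float:
    """Shared pool: capacity sized for the aggregate demand plus headroom."""
    provisioned = sum(demands) * (1 + headroom)
    return sum(demands) / provisioned


if __name__ == "__main__":
    # DAS drives must be at least as large as the biggest single demand.
    das = das_utilization(demands_tb, max(demands_tb))
    pooled = pooled_utilization(demands_tb)
    print(f"DAS utilization:    {das:.0%}")     # 16 TB used / 28 TB provisioned -> 57%
    print(f"Pooled utilization: {pooled:.0%}")  # 16 TB used / 19.2 TB provisioned -> 83%
```

The gap widens as demand becomes more uneven across nodes, because DAS must be sized per node while a pool only has to absorb the aggregate.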

Parallelism with NVMe/TCP Multipathing

Enable seamless failover using simplyblock’s storage platform in case of a drive or node outage.

Scale out storage performance with multiple TCP streams for higher parallelism and throughput.

Get the best performance by fully utilizing multi-core servers and NVMe I/O queue parallelism.
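To make the multipathing idea concrete, here is a simplified Python model of round-robin path selection with failover: I/O is spread across several paths (for example, separate TCP connections to different storage nodes), and a path marked unhealthy is skipped until it recovers. This is a hypothetical illustration of the general technique, not simplyblock's or the Linux kernel's implementation; `Path` and `RoundRobinSelector` are invented names.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One I/O path, e.g. a TCP connection to a storage node (hypothetical)."""
    name: str
    healthy: bool = True


class RoundRobinSelector:
    """Rotate I/O across paths and skip any path currently marked unhealthy."""

    def __init__(self, paths: list[Path]):
        if not paths:
            raise ValueError("at least one path is required")
        self.paths = list(paths)  # same Path objects, so health changes are seen
        self._next = 0            # index of the path to try first on the next pick

    def pick(self) -> Path:
        n = len(self.paths)
        for offset in range(n):
            idx = (self._next + offset) % n
            if self.paths[idx].healthy:
                self._next = (idx + 1) % n
                return self.paths[idx]
        raise RuntimeError("no healthy path available")


if __name__ == "__main__":
    paths = [Path("node-a"), Path("node-b"), Path("node-c")]
    selector = RoundRobinSelector(paths)
    print([selector.pick().name for _ in range(3)])  # ['node-a', 'node-b', 'node-c']
    paths[1].healthy = False  # simulate a node outage
    print([selector.pick().name for _ in range(4)])  # ['node-a', 'node-c', 'node-a', 'node-c']
```

Production multipath stacks add health probing and may weigh factors such as NUMA locality or queue depth, but the skip-and-continue rotation shows how failover and parallelism fit together.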