The marriage of NVMe storage and Kubernetes persistent volumes represents a perfect union of high-performance storage and modern container orchestration. As organizations increasingly move performance-critical workloads to Kubernetes, understanding how to leverage NVMe technology becomes crucial for achieving optimal performance and efficiency.

The Evolution of Storage in Kubernetes

When Kubernetes was created over 10 years ago, its only purpose was to schedule and orchestrate stateless workloads. Since then, a lot has changed, and Kubernetes is increasingly used for stateful workloads. Not just basic ones but mission-critical workloads, like a company’s primary databases. The promise of workload orchestration in increasingly complex infrastructures is too significant to ignore, making infrastructure management a crucial aspect of Kubernetes storage evolution.

However, traditional Kubernetes storage solutions relied, and still rely, on older network-attached storage protocols like iSCSI. Released in 2000, iSCSI was built on the SCSI protocol itself, first introduced in the 1980s. Hence, both protocols are inherently designed for spinning disks with much higher seek times and access latencies. Measured against modern expectations for low latency and low complexity, they just can’t keep up.

While these solutions worked well for basic containerized applications, they fall short for high-performance workloads like databases, AI/ML training, and real-time analytics. Let’s look at the NVMe standard, particularly NVMe over TCP, which has transformed our thinking about storage in containerized environments, not just Kubernetes.

Why NVMe and Kubernetes Work So Well Together

The beauty of this combination lies in their complementary architectures. The NVMe protocol and command set were designed from the ground up for parallel, low-latency operations, which is precisely what modern containerized applications demand. When you combine NVMe’s parallelism with Kubernetes’ orchestration capabilities, you get a system that can efficiently distribute I/O-intensive workloads while maintaining microsecond-level latency. Furthermore, compared with iSCSI, NVMe over TCP delivers significantly higher IOPS and lower latency.

Consider a typical database workload on Kubernetes. Traditional storage might introduce latencies of 2-4ms for read operations. With NVMe over TCP, these same operations complete in under 200 microseconds–a 10-20x improvement. This isn’t just about raw speed; it’s about enabling new classes of applications to run effectively in containerized environments.
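To make that arithmetic concrete, here is a minimal sketch that converts the latencies quoted above into the number of back-to-back operations a single caller could complete per second. It assumes one outstanding I/O at a time (queue depth 1) and ignores CPU and network overhead; the function name is illustrative only.

```python
def sync_ops_per_second(latency_seconds: float) -> float:
    """Operations per second for one caller issuing I/O strictly one at a time."""
    return 1.0 / latency_seconds

# Latencies quoted in the text above.
traditional_ms = (2.0, 4.0)   # 2-4 ms per read
nvme_tcp_us = 200.0           # upper bound of "under 200 microseconds"

nvme_ops = sync_ops_per_second(nvme_tcp_us / 1_000_000)
for ms in traditional_ms:
    trad_ops = sync_ops_per_second(ms / 1_000)
    print(f"{ms:.0f} ms -> {trad_ops:.0f} ops/s, "
          f"200 us -> {nvme_ops:.0f} ops/s, "
          f"speedup {nvme_ops / trad_ops:.0f}x")
```

Real workloads issue many requests in parallel, so absolute numbers will differ, but the ratio between the two latency classes stays the same under this simplified model.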

The Technical Symphony

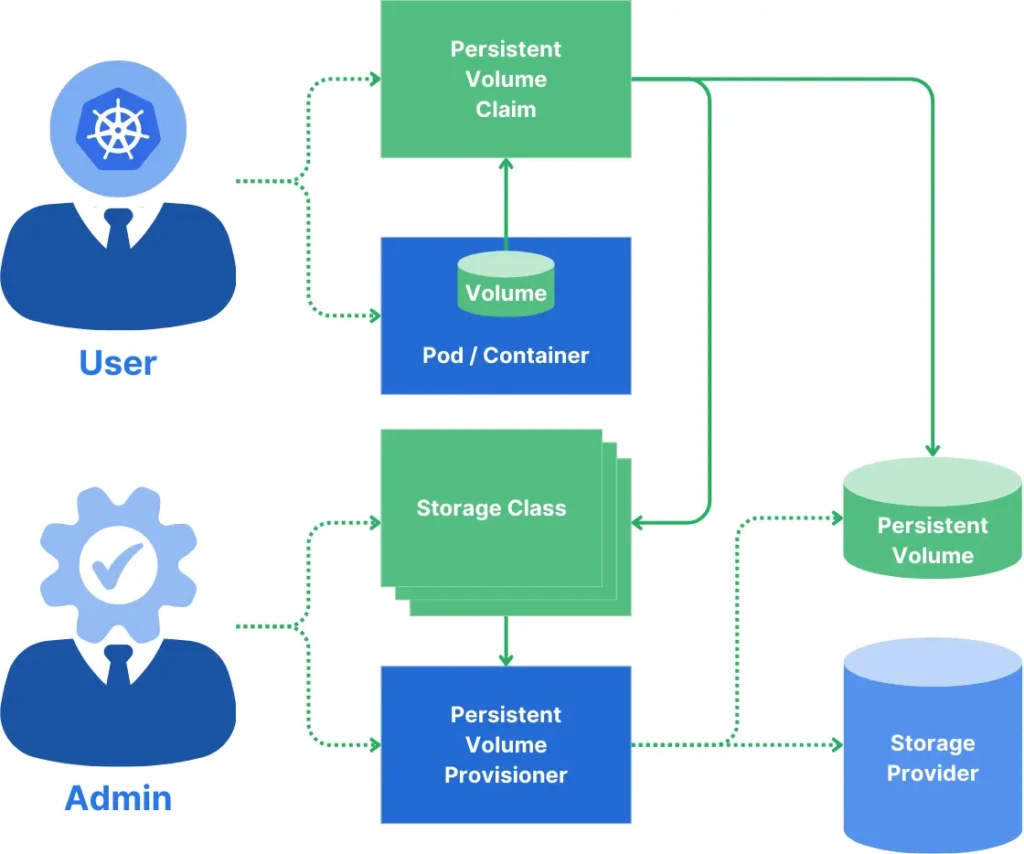

The integration of NVMe with Kubernetes is particularly elegant through persistent volumes and the Container Storage Interface (CSI). Modern storage orchestrators like simplyblock leverage this interface to provide seamless NVMe storage provisioning while maintaining Kubernetes’ declarative model. This means development teams can request high-performance storage using familiar Kubernetes constructs while the underlying system handles the complexity of NVMe management, providing fully reliable shared storage.
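As a rough illustration of what "familiar Kubernetes constructs" means here, the sketch below builds a PersistentVolumeClaim manifest as a plain Python dictionary and prints it as JSON. The claim name, size, and the storage class name `nvme-tcp-example` are hypothetical placeholders, not names defined by simplyblock or any other CSI driver.

```python
import json

def build_pvc(name: str, storage_class: str, size: str) -> dict:
    """Return a PersistentVolumeClaim manifest as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }

# "nvme-tcp-example" is a hypothetical storage class name; use whatever
# class your cluster's NVMe-capable CSI driver actually provides.
pvc = build_pvc("postgres-data", "nvme-tcp-example", "100Gi")
print(json.dumps(pvc, indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed manifest could be piped into `kubectl apply -f -`. The application only names a storage class and a size; everything NVMe-specific stays behind the CSI driver.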

The NVMe Impact: A Real-World Example

But what does that mean for actual workloads? Our friends over at Percona found in their MongoDB Performance on Kubernetes report that Kubernetes imposes no performance penalty. Hence, we can look at the disks’ actual raw performance.

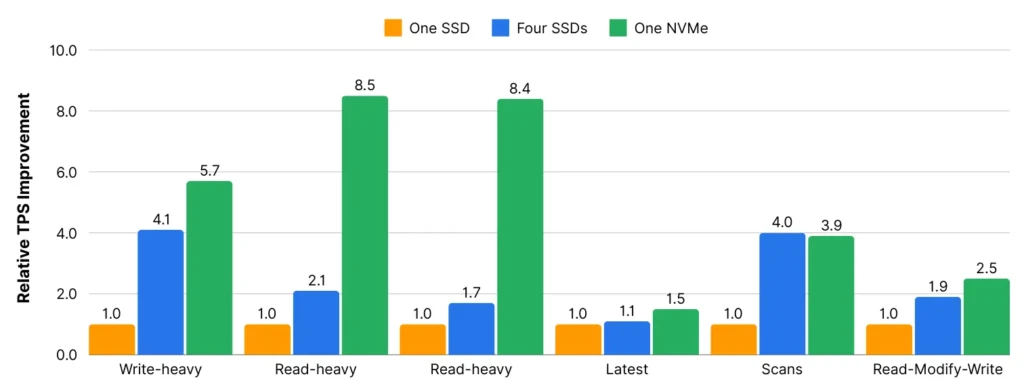

A team of researchers from the University of Southern California, San Jose State University, and Samsung Semiconductor took on the challenge of measuring the implications of NVMe SSDs (over SATA SSD and SATA HDD) for real-world database performance.

The general performance characteristics of their test hardware:

| | NVMe SSD | SATA SSD | SATA HDD |
|---|---|---|---|
| Access latency | 113µs | 125µs | 14,295µs |
| Maximum IOPS | 750,000 | 70,000 | 190 |
| Maximum Bandwidth | 3GB/s | 278MB/s | 791KB/s |

Their summary states, “scale-out systems are driving the need for high-performance storage solutions with high available bandwidth and lower access latencies. To address this need, newer standards are being developed exclusively for non-volatile storage devices like SSDs,” and “NVMe’s hardware and software redesign of the storage subsystem translates into real-world benefits.”

They close with some direct comparisons that claim an 8x performance improvement of NVMe-based SSDs compared to a single SATA-based SSD and still a 5x improvement over a [Hardware] RAID-0 of four SATA-based SSDs.
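The raw hardware figures in the table above can also be turned into ratios. This is a small sketch under one stated assumption: decimal units are used for the conversion (1 GB/s = 1,000 MB/s, 1 MB/s = 1,000 KB/s). These ratios describe the test hardware only and are separate from the database-level 8x and 5x results the researchers report.

```python
# Figures from the hardware table above, normalized to common units.
# Assumption: decimal units (1 GB/s = 1,000 MB/s, 1 MB/s = 1,000 KB/s).
devices = {
    "NVMe SSD": {"latency_us": 113, "iops": 750_000, "bandwidth_mb_s": 3_000},
    "SATA SSD": {"latency_us": 125, "iops": 70_000, "bandwidth_mb_s": 278},
    "SATA HDD": {"latency_us": 14_295, "iops": 190, "bandwidth_mb_s": 0.791},
}

def advantage(baseline: str, metric: str) -> float:
    """How many times better the NVMe SSD is than `baseline` on `metric`."""
    nvme, other = devices["NVMe SSD"][metric], devices[baseline][metric]
    # Lower is better for latency; higher is better for IOPS and bandwidth.
    return other / nvme if metric == "latency_us" else nvme / other

for baseline in ("SATA SSD", "SATA HDD"):
    ratios = {m: advantage(baseline, m) for m in devices[baseline]}
    print(baseline, {m: f"{r:.1f}x" for m, r in ratios.items()})
```

Against the SATA SSD, single-access latency is nearly identical while IOPS and bandwidth differ by roughly an order of magnitude, which fits the point that much of NVMe's advantage comes from parallelism.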

Transforming Database Operations

Perhaps the most compelling use case for NVMe in Kubernetes is database operations. Typical modern databases process queries significantly faster when storage isn’t the bottleneck. This becomes particularly important in microservices architectures where concurrent database requests and high-load scenarios are the norm.

Traditionally, running stateful services in Kubernetes meant accepting significant performance overhead. With NVMe storage, organizations can now run high-performance databases, caches, and messaging systems with performance comparable to running directly on bare-metal infrastructure.

Dynamic Resource Allocation

One of Kubernetes’ central promises is dynamic resource allocation. That means assigning CPU and memory according to actual application requirements. Furthermore, it also means dynamically allocating storage for stateful workloads. With storage classes, Kubernetes provides the option to assign different types of storage backends to different types of applications. While not strictly necessary, this can be a great application of the “best tool for the job” principle.
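A hedged sketch of the storage class idea: the snippet below builds two StorageClass manifests as Python dictionaries, one intended for latency-sensitive workloads and one for bulk data. The class names, the `*.csi.example.com` provisioner names, and the `tier` parameter are all hypothetical; real CSI drivers define their own provisioner names and parameters.

```python
import json

def build_storage_class(name: str, provisioner: str, parameters: dict) -> dict:
    """Return a StorageClass manifest as a plain dictionary."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": provisioner,
        "parameters": parameters,  # driver-specific; values must be strings
        "reclaimPolicy": "Delete",
        "volumeBindingMode": "WaitForFirstConsumer",
        "allowVolumeExpansion": True,
    }

# Hypothetical drivers and parameters, for illustration only.
classes = [
    build_storage_class("fast-nvme", "nvme.csi.example.com", {"tier": "performance"}),
    build_storage_class("bulk-archive", "archive.csi.example.com", {"tier": "capacity"}),
]
for storage_class in classes:
    print(json.dumps(storage_class, indent=2))
```

A database would then reference `fast-nvme` in its volume claim, while a log archive could reference `bulk-archive`, without either application knowing anything about the backend.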

That said, for I/O-intensive workloads, such as databases, a storage backend providing NVMe storage is essential. NVMe’s ability to handle massive I/O parallelism aligns perfectly with Kubernetes’ scheduling capabilities. Storage resources can be dynamically allocated and deallocated based on workload demands, ensuring optimal resource utilization while maintaining performance guarantees.

Simplified High Availability

The low latency of NVMe over TCP enables new approaches to high availability. Instead of complex database replication schemes, organizations can leverage storage-level replication (or more storage-efficient erasure coding, like in the case of simplyblock) with a negligible performance impact. This significantly simplifies application architecture while improving reliability.

Furthermore, NVMe over TCP utilizes multipathing as an automatic fail-over implementation to protect against network connection issues and sudden connection drops, increasing the high availability of persistent volumes in Kubernetes.
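The storage-efficiency difference between replication and erasure coding mentioned above comes down to simple arithmetic. The sketch below compares raw capacity consumed per byte of user data. The two schemes (three full copies, and four data chunks plus two parity chunks) are assumed examples chosen because both tolerate two lost devices; they are not taken from any specific product's configuration.

```python
def replication_overhead(copies: int) -> float:
    """Raw capacity consumed per byte of user data when storing full copies."""
    return float(copies)

def erasure_coding_overhead(data_chunks: int, parity_chunks: int) -> float:
    """Raw capacity consumed per byte of user data with k data + m parity chunks."""
    return (data_chunks + parity_chunks) / data_chunks

# Assumed example schemes; both survive the loss of any two devices.
schemes = {
    "3x replication": replication_overhead(3),
    "4+2 erasure coding": erasure_coding_overhead(4, 2),
}
for name, overhead in schemes.items():
    print(f"{name}: {overhead:.2f}x raw capacity, "
          f"{100 / overhead:.0f}% usable")
```

Under these assumptions, erasure coding needs half the raw capacity of three-way replication for the same fault tolerance, at the cost of extra computation and network traffic when writing and rebuilding data.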

The Physics Behind NVMe Performance

Many teams don’t realize how profoundly storage physics impacts database operations. Traditional storage solutions averaging 2-4ms latency might seem fast, but this translates to a hard limit of about 80 consistent transactions per second, even before considering CPU or network overhead. Each transaction requires multiple storage operations: reading data pages, writing to WAL, updating indexes, and performing one or more fsync() operations. At 3ms per operation, these quickly stack up into significant delays. Many teams spend weeks optimizing queries or adding memory when their real bottleneck is fundamental storage latency.

This is where the NVMe and Kubernetes combination truly shines. With NVMe as your Kubernetes persistent volume storage backend, providing sub-200μs latency, the same database operations can theoretically support over 1,200 transactions per second–a 15x improvement. More importantly, this dramatic reduction in storage latency changes how databases behave under load. Connection pools remain healthy longer, buffer cache decisions become more efficient, and query planners can make better optimization choices. With the storage bottleneck removed, databases can finally operate closer to their theoretical performance limits.
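The transaction figures above can be reproduced with a back-of-the-envelope model. The sketch assumes each transaction waits on four storage operations issued strictly one after another (for example a page read, a WAL write, an index update, and an fsync). That count of four is an assumption chosen because it matches the quoted numbers; the text itself only says "multiple" operations.

```python
def max_sequential_tps(latency_seconds: float, storage_ops_per_txn: int) -> float:
    """Upper bound on transactions per second when every transaction waits on
    `storage_ops_per_txn` storage operations issued one after another."""
    return 1.0 / (latency_seconds * storage_ops_per_txn)

# Assumption (not stated in the text): four dependent storage operations per
# transaction, e.g. page read, WAL write, index update, fsync.
OPS_PER_TXN = 4

traditional = max_sequential_tps(0.003, OPS_PER_TXN)   # 3 ms per operation
nvme = max_sequential_tps(0.0002, OPS_PER_TXN)         # 200 us per operation

print(f"traditional: ~{traditional:.0f} tps")
print(f"NVMe:        ~{nvme:.0f} tps")
print(f"improvement: ~{nvme / traditional:.0f}x")
```

This is a per-connection ceiling for strictly serialized transactions; concurrency, group commit, and caching change the absolute numbers in practice, but the ratio shows why storage latency dominates.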

Looking Ahead

The combination of NVMe and Kubernetes is just beginning to show its potential. As more organizations move performance-critical workloads to Kubernetes, we’ll likely see new patterns and use cases that fully take advantage of this powerful combination.

Some areas to watch:

- AI/ML workload optimization through intelligent data placement

- Real-time analytics platforms leveraging NVMe’s parallel access capabilities

- Next-generation database architectures built specifically for NVMe on Kubernetes Persistent Volumes

The marriage of NVMe-based storage and Kubernetes Persistent Volumes represents more than just a performance improvement. It’s a fundamental shift in how we think about storage for containerized environments. Organizations that understand and leverage this combination effectively gain a significant competitive advantage through improved performance, reduced complexity, and better resource utilization.

For a deeper dive into implementing NVMe storage in Kubernetes, visit our guide on optimizing Kubernetes storage performance.

Questions and Answers

NVMe storage offers ultra-low latency and high IOPS, making it perfect for Kubernetes workloads that demand fast and scalable persistent storage. It helps ensure performance even as clusters grow or scale dynamically.

NVMe over TCP allows high-performance NVMe storage to be used across standard Ethernet networks without proprietary fabrics. It brings near-local SSD performance to containerized applications with full Kubernetes support.

Together, NVMe and Kubernetes deliver scalability, speed, and flexibility. NVMe supports high-performance apps, while Kubernetes orchestrates workloads at scale. Solutions like simplyblock unify both with dynamic provisioning, encryption, and snapshots.

Yes, using NVMe-backed storage with Kubernetes enables reliable, fast stateful services like databases, message queues, and analytics platforms. Simplyblock’s CSI driver ensures seamless integration with these workloads.

Simplyblock simplifies NVMe storage adoption by offering native Kubernetes support, NVMe-over-TCP transport, instant volume provisioning, and encryption at rest. It brings enterprise-grade performance and security to modern container environments.