NVMe over TCP vs Fibre Channel


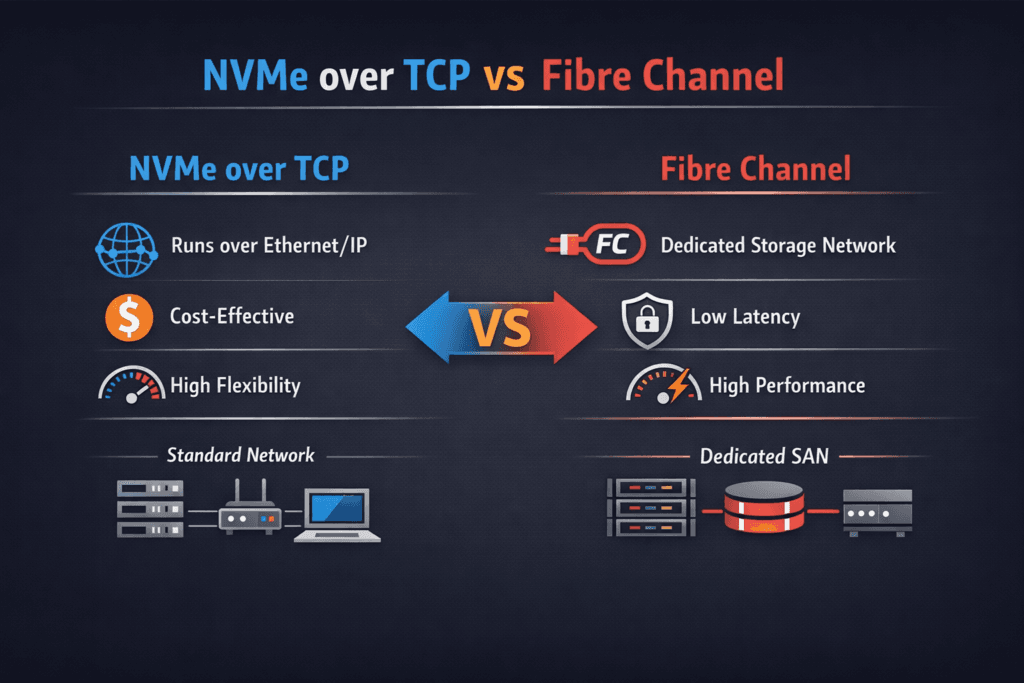

NVMe over TCP vs Fibre Channel compares two ways to carry NVMe-oF block I/O between servers and shared flash. NVMe/TCP runs on standard Ethernet using the TCP/IP stack. Fibre Channel runs on a dedicated SAN fabric that storage teams often manage as its own network. Both can push high IOPS and strong throughput, but they differ in day-to-day operations, scaling, cost drivers, and tail latency under load.

Executives usually focus on risk, change control, and steady application response times. Platform teams focus on fast provisioning, clean failure handling, and simple troubleshooting. The right option is the one your organization can run well every week, not just during a proof of concept.

Why NVMe over TCP vs Fibre Channel changes the storage playbook

Teams often pick a transport and then try to tune around its weak spots. That wastes time and hides the real bottlenecks. Start with the full data path: CPU cycles per I/O, queue depth, network congestion, and workload isolation.

NVMe/TCP fits teams that standardize on Ethernet and automation. Fibre Channel fits teams that already run SAN tools, zoning, and strict change rules. Either choice still needs strong controls for noisy neighbors, because mixed workloads can spike p99 latency faster than they hit a throughput ceiling.

🚀 Reduce p99 Latency When Choosing Between NVMe/TCP and Fibre Channel

Use Simplyblock to isolate tenants with QoS and keep NVMe-oF performance steady at scale.

👉 Use Simplyblock for Kubernetes Storage Performance →

Fabric-backed storage inside Kubernetes Storage

Kubernetes Storage turns storage into a platform issue, not a ticket queue. Developers expect fast volume creation, stable latency, and safe rollouts. SRE and DevOps teams need upgrades that do not break mounts, plus clear metrics when performance drifts.

NVMe/TCP often maps well to Kubernetes because the cluster already uses Ethernet, and teams can reuse the same network playbooks. Fibre Channel can also support Kubernetes, but it may add a second ops lane with different tools and change windows. That split can slow incident response when teams need quick answers.

Software-defined Block Storage helps because it provides one control plane across layouts. Hyper-converged placement favors simple wiring and local paths. Disaggregated placement favors independent scaling of compute and storage. A mixed layout supports tiering without forcing app changes.

NVMe/TCP behavior and what it means for fabric choice

NVMe/TCP uses the host CPU and the network stack, so CPU pressure can raise tail latency. Network noise can also push p99 higher when storage traffic competes with east-west app traffic. Teams limit that risk when they reserve CPU, keep node settings uniform, and enforce network policy.

Fibre Channel keeps storage traffic on its own fabric. That separation can reduce cross-traffic surprises. Many shops also like the guardrails that SAN ops already enforce. The tradeoff shows up in port planning, SAN refresh cycles, and the smaller pool of engineers who can debug the fabric quickly.

Cost and operations in NVMe over TCP vs Fibre Channel

Ethernet-based NVMe/TCP usually benefits from broad hardware choice, shared skills, and common automation. It can also lower the barrier to expansion when you already budget for Ethernet growth. Fibre Channel often carries higher specialization costs, but it can deliver strong process discipline in SAN-first environments.

Operational cost matters as much as capex. Ask who owns the fabric, who owns incident response, and how fast teams can change paths when apps demand more performance. Those answers often decide the outcome before you run a benchmark.

Benchmarking that reflects real workload conditions

A useful benchmark proves sustained throughput and stable latency. Begin with local NVMe tests to set the upper bound. Then measure the network path using the same block size, job count, and queue depth.
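One way to keep the local and network runs comparable is to generate both fio job files from a single set of parameters. The sketch below is a minimal illustration, not a recommended profile: the device paths, job names, and default values are hypothetical, and it assumes a Linux host where fio's `libaio` engine is available.

```python
def build_fio_job(name, device, block_size="4k", num_jobs=4,
                  queue_depth=32, runtime_s=300):
    """Return fio job-file text for a random-read test against one block device.

    All defaults are illustrative assumptions; pick values that match
    the workload you actually run.
    """
    lines = [
        f"[{name}]",
        f"filename={device}",
        "ioengine=libaio",      # Linux async I/O engine
        "direct=1",             # bypass the page cache
        "rw=randread",
        f"bs={block_size}",
        f"numjobs={num_jobs}",
        f"iodepth={queue_depth}",
        "time_based=1",         # run for the full runtime
        f"runtime={runtime_s}",
        "group_reporting=1",    # aggregate stats across jobs
    ]
    return "\n".join(lines) + "\n"


# Hypothetical device paths: a local NVMe namespace and a fabric-attached one.
local_job = build_fio_job("local-baseline", "/dev/nvme0n1")
fabric_job = build_fio_job("fabric-path", "/dev/nvme1n1")

print(local_job)
print(fabric_job)
```

Because both job files come from the same function, only the job name and target device differ between the baseline and the network run.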

Track p50, p95, and p99 latency along with IOPS and bandwidth. Run tests long enough to reach steady state. Add a contention run where a second workload drives load at the same time, because shared platforms often fail first at tail latency.
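As a rough sketch of how these numbers can be pulled from a run, the code below reads a report produced with `fio --output-format=json` and compares p99 between a solo run and a contention run. It assumes the report uses fio's JSON layout, with completion-latency percentiles under `clat_ns` and bandwidth in KiB/s, and that `group_reporting` was set so the first job entry holds the aggregate. The sample values are invented for illustration, not measurements.

```python
import json


def latency_summary(fio_json_text, direction="read"):
    """Extract IOPS, bandwidth, and p50/p95/p99 latency from fio JSON output."""
    report = json.loads(fio_json_text)
    stats = report["jobs"][0][direction]   # aggregate entry (assumes group_reporting)
    pct = stats["clat_ns"]["percentile"]   # keys look like "99.000000"
    return {
        "iops": stats["iops"],
        "bw_kib_s": stats["bw"],
        "p50_us": pct["50.000000"] / 1000.0,
        "p95_us": pct["95.000000"] / 1000.0,
        "p99_us": pct["99.000000"] / 1000.0,
    }


def sample_report(p50_ns, p95_ns, p99_ns, iops, bw_kib_s):
    """Build a tiny stand-in for a fio JSON report (illustrative values only)."""
    return json.dumps({"jobs": [{"read": {
        "iops": iops,
        "bw": bw_kib_s,
        "clat_ns": {"percentile": {
            "50.000000": p50_ns,
            "95.000000": p95_ns,
            "99.000000": p99_ns,
        }},
    }}]})


solo = latency_summary(sample_report(150_000, 250_000, 400_000, 180_000, 720_000))
contended = latency_summary(sample_report(200_000, 600_000, 1_200_000, 150_000, 600_000))

# How much p99 grows when a second workload shares the path.
p99_inflation = contended["p99_us"] / solo["p99_us"]
print(f"solo p99={solo['p99_us']} us, contended p99={contended['p99_us']} us, "
      f"inflation={p99_inflation:.1f}x")
```

Tracking the p99 inflation ratio alongside IOPS makes it easier to see when a platform holds throughput but loses tail latency under contention.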

Practical tuning to lower jitter and protect p99

Keep tuning simple, and change one variable at a time. Re-run the same fio profile after each change, and compare p99.

- Reserve CPU cores for storage services, and keep IRQ settings consistent across nodes.

- Standardize MTU, queue counts, and congestion settings across the fabric.

- Set queue depth to meet latency goals instead of chasing peak IOPS.

- Apply tenant-aware QoS so batch jobs cannot drown latency-sensitive apps.
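The queue-depth advice above can be made concrete with Little's Law, which relates the number of outstanding I/Os to throughput and mean latency. The sketch below is a back-of-the-envelope aid, not a tuning rule: the function names and example figures are hypothetical, and the relationship holds for mean latency in steady state, so p99 still has to be measured.

```python
import math


def required_outstanding_ios(target_iops, mean_latency_us):
    """Little's Law: outstanding I/Os = throughput x mean latency."""
    return math.ceil(target_iops * mean_latency_us / 1_000_000)


def achievable_iops(outstanding_ios, mean_latency_us):
    """Upper bound on IOPS for a given total queue depth and mean latency."""
    return outstanding_ios * 1_000_000 / mean_latency_us


# Illustrative targets: 200k IOPS at a 200 microsecond mean latency.
needed = required_outstanding_ios(200_000, 200)
print(needed)                    # total outstanding I/Os across all jobs

# With only 32 outstanding I/Os at the same latency, throughput is capped.
print(achievable_iops(32, 200))
```

Raising queue depth beyond what the latency target requires mostly adds queueing delay, which is why the depth is better derived from the latency goal than from peak IOPS.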

Decision score card for NVMe over TCP vs Fibre Channel

The table below highlights the factors that drive cost, speed, and operational load in real environments.

| Decision factor | NVMe/TCP over Ethernet | Fibre Channel for NVMe |
|---|---|---|
| Network domain | Shared IP/Ethernet | Dedicated SAN fabric |
| Scale model | Add NICs and switches | Add ports and fabrics |
| Ops model | IP tools and automation | SAN tools and zoning |
| Kubernetes fit | Often simpler for cluster teams | Strong in SAN-first orgs |
| Tail-latency risk | More sensitive to CPU and congestion | Often steadier with strict SAN rules |

Simplyblock™ for steady latency and clean operations

Simplyblock targets steady performance by tightening the I/O path and enforcing policy close to the data. Its SPDK-based, user-space design aims to cut overhead and keep I/O fast and consistent, which helps NVMe/TCP deployments that fight CPU noise and jitter.

For Kubernetes Storage, simplyblock emphasizes multi-tenancy and QoS so teams can protect priority workloads. Software-defined Block Storage also supports flexible layouts, so teams can run hyper-converged, disaggregated, or mixed clusters without changing the app layer.

What comes next for fabrics and NVMe-oF

Ethernet fabrics will keep gaining speed, and more teams will run storage traffic on the same rails as compute traffic. That shift raises the bar on congestion control and node consistency. Fibre Channel will keep its place in enterprises that value a dedicated storage network and tight governance.

More organizations will also use DPUs and IPUs to offload networking and storage work. That change can improve isolation and reduce CPU jitter, which helps p99 latency in multi-tenant platforms.

Related Terms

Teams often review these alongside NVMe over TCP vs Fibre Channel to align transport choice with Kubernetes Storage targets.

Questions and Answers

If you’re modernizing a SAN and want NVMe semantics over existing Ethernet, NVMe over TCP is often the cleaner path. It avoids FC-specific switching and HBA workflows while still delivering low-latency remote NVMe access. The decision usually comes down to whether you want a dedicated FC fabric or an IP-native approach that scales with standard networking.

Fibre Channel brings a mature storage operational model, but it also adds fabric-specific zoning, tooling, and specialized components. NVMe/TCP typically reduces that by riding on Ethernet/IP and reusing existing network ops, which can simplify rollouts and expansions. For teams comparing lifecycle complexity, it’s helpful to frame it as SAN vs NVMe over TCP rather than a pure latency shootout.

Both are NVMe-oF transports, but their behavior depends on fabric characteristics and the host CPU. FC-NVMe can inherit predictable SAN-style isolation, while NVMe/TCP often wins on deployment speed and cost efficiency on common Ethernet. The most useful comparison is transport-level constraints and tuning, using NVMe-oF plus the NVMe over Fabrics transport comparison.

FC designs often rely on dual fabrics and established multipath patterns, while NVMe/TCP redundancy is usually built on IP networking plus NVMe-oF multipathing. The “gotcha” is that IP fabrics can hide oversubscription and shared-fate links unless you model failure domains explicitly. If you’re planning HA, compare FC-NVMe details in NVMe over FC against Ethernet-based NVMe over TCP architecture.
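Modeling failure domains explicitly can start as a simple check for components that every path depends on. The sketch below is a minimal illustration under assumed inputs: the path names and component labels (`nic0`, `leaf1`, `spine1`, and so on) are hypothetical, and a real inventory would come from your own topology data.

```python
from collections import defaultdict


def shared_fate_components(paths):
    """Return components that every path traverses (single points of failure).

    `paths` maps a path name to the list of components it depends on.
    """
    usage = defaultdict(set)
    for path_name, components in paths.items():
        for component in components:
            usage[component].add(path_name)

    all_paths = set(paths)
    if len(all_paths) < 2:
        return []  # shared fate only has meaning with more than one path
    return sorted(c for c, users in usage.items() if users == all_paths)


# Hypothetical two-path layout that looks redundant but shares one spine switch.
paths = {
    "path-a": ["nic0", "leaf1", "spine1", "target-port0"],
    "path-b": ["nic1", "leaf2", "spine1", "target-port1"],
}

print(shared_fate_components(paths))
```

In this layout the two paths use separate NICs, leaf switches, and target ports, yet both cross `spine1`, so losing that switch takes down both paths at once.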

Treat it as a fabric redesign, not just a protocol swap. Validate p95/p99 under real queue depth, confirm multipath and failover timing, and benchmark with production-like mixes before cutting over. A practical approach is to baseline your environment, then test NVMe/TCP with repeatable profiles using fio NVMe over TCP benchmarking, and align expectations with NVMe over TCP SAN alternative.