Elbencho Storage Benchmark

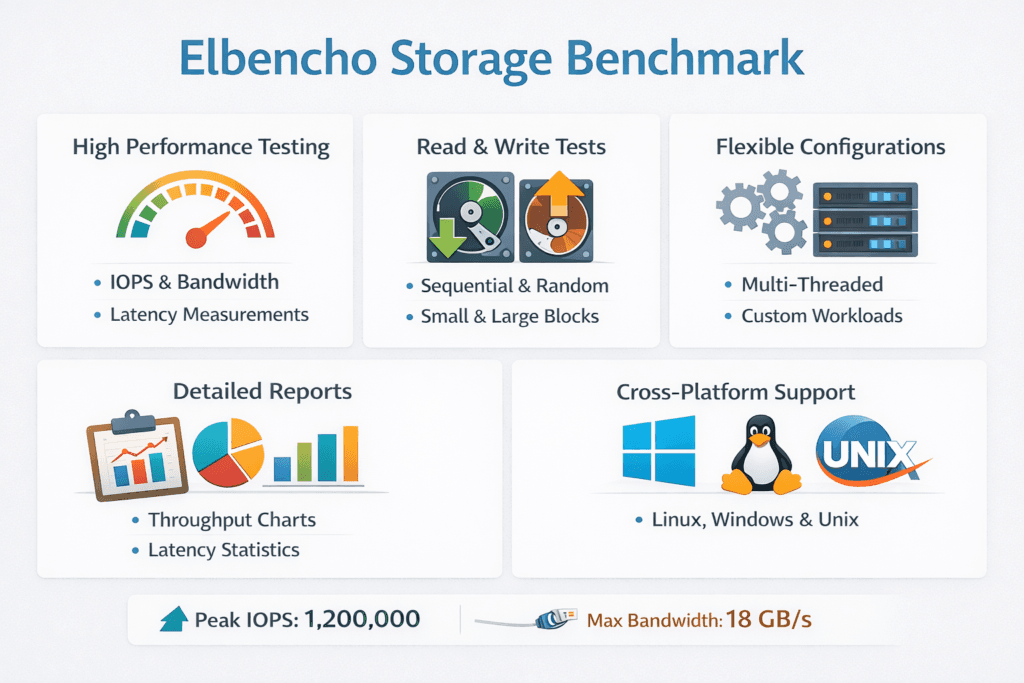

Elbencho Storage Benchmark is a distributed tool that measures IOPS, throughput, and latency across file systems, object stores, and block devices. Teams use it to validate storage claims, catch tail spikes, and compare platforms under real fan-out, not single-host best cases.

elbencho is most useful when you test shared storage, multi-client access, or a Kubernetes cluster where multiple pods access the same volumes concurrently. Its reporting also separates results measured when the first worker finished from results measured when the last one finished, so you can spot stragglers that hurt p99 latency.
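To see why the two views differ, here is a simplified model, not elbencho's own calculation. It assumes each worker moves data at a constant rate, and the worker names and byte counts are hypothetical. The "first finished" view is the aggregate rate at the moment the fastest worker completes; the "last finished" view spans the whole run, so one slow worker pulls it down.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class WorkerResult:
    """Hypothetical per-worker totals: bytes moved and seconds until it finished."""
    name: str
    bytes_done: int
    elapsed_s: float


def first_and_last_finished(workers: List[WorkerResult]) -> Tuple[float, float]:
    """Return aggregate bytes/s at first-finished time and at last-finished time.

    Simplifying assumption: every worker runs at a constant rate until it finishes.
    """
    first_t = min(w.elapsed_s for w in workers)
    last_t = max(w.elapsed_s for w in workers)
    # Bytes each worker had moved at the moment the fastest worker finished.
    moved_by_first_t = sum(
        min(w.bytes_done, w.bytes_done / w.elapsed_s * first_t) for w in workers
    )
    total = sum(w.bytes_done for w in workers)
    return moved_by_first_t / first_t, total / last_t


if __name__ == "__main__":
    gb = 1_000_000_000
    run = [
        WorkerResult("node-a", gb, 10.0),
        WorkerResult("node-b", gb, 10.0),
        WorkerResult("node-c", gb, 20.0),  # straggler at half speed
    ]
    first, last = first_and_last_finished(run)
    print(f"first finished: {first / 1e6:.0f} MB/s, last finished: {last / 1e6:.0f} MB/s")
```

With one worker at half speed, the first-finished view reports 250 MB/s while the last-finished view drops to 150 MB/s; that gap is the straggler signal.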

Benchmark Design for Repeatable Results

A benchmark run needs a clear target. Decide what you want to learn, then remove extra variables. Many teams chase peak numbers, then miss the real risk: inconsistent latency under mixed load.

Kubernetes Storage adds its own moving parts. A pod shares CPU with other pods, a node shares NIC queues, and the CSI layer adds volume attach and mount behavior. If you test outside that path, you measure the wrong thing.

Software-defined Block Storage helps here because it standardizes the data plane and the policy layer across nodes. You can keep the same volume policy while changing the cluster shape, hardware, or placement model. That approach keeps results meaningful for budget planning and SLO reviews.

What elbencho measures best

Elbencho gives a strong signal when many clients compete for the same storage service. It highlights slow nodes, uneven load, and end-to-end drift that a single-node test can hide.
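A minimal sketch of the "slow node" check, assuming you already have one throughput figure per client host (how you export those numbers is up to you). The 80% threshold and host names are hypothetical.

```python
import statistics
from typing import Dict, List


def find_stragglers(per_host_mibps: Dict[str, float], threshold: float = 0.8) -> List[str]:
    """Return hosts whose throughput falls below `threshold` x the median host.

    Comparing against the median (not the mean) keeps a single slow host
    from dragging down the baseline it is judged against.
    """
    baseline = statistics.median(per_host_mibps.values())
    return sorted(h for h, v in per_host_mibps.items() if v < threshold * baseline)


if __name__ == "__main__":
    results = {"node-a": 1200.0, "node-b": 1180.0, "node-c": 640.0, "node-d": 1210.0}
    print(find_stragglers(results))  # node-c is below 80% of the 1190 MiB/s median
```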

Elbencho Storage Benchmark in Kubernetes Storage

Run elbencho inside the same Kubernetes Storage path your workloads use. That means you point it at a PVC from the real StorageClass, and you keep pod resources stable.

Set CPU and memory requests and limits so the scheduler does not reshape the run. Use node affinity when you need a fixed client set. Spread clients across nodes when you want a cluster view. Those choices let you answer two different questions: “What does one pod get?” and “What does the platform deliver under fan-out?”
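The settings above can be expressed as a Pod spec. This sketch builds one as a Python dict and prints JSON, which `kubectl apply -f -` accepts. Assumptions, all hypothetical: the image name `breuner/elbencho` (pin a tag you have verified), an `elbencho` binary on the image's PATH, the mount path `/data`, the device path `/dev/bench`, and running each pod as an elbencho service (`--service --foreground`) so a coordinating client can drive it. Check those flags against `elbencho --help` for your version.

```python
import json
from typing import Any, Dict, Optional


def elbencho_pod(name: str, pvc: str, cpu: str, memory: str,
                 node: Optional[str] = None, raw_block: bool = False,
                 image: str = "breuner/elbencho") -> Dict[str, Any]:
    """Build a benchmark Pod that uses a real PVC and fixed resources."""
    resources = {"cpu": cpu, "memory": memory}
    container: Dict[str, Any] = {
        "name": "elbencho",
        "image": image,  # assumption: pin a verified tag in practice
        # Assumed service-mode flags; verify with `elbencho --help`.
        "command": ["elbencho", "--service", "--foreground"],
        # requests == limits for CPU and memory gives the Guaranteed QoS class,
        # so the scheduler and kubelet do not reshape the run.
        "resources": {"requests": dict(resources), "limits": dict(resources)},
    }
    if raw_block:
        # PVCs with volumeMode: Block are attached as devices, not mounted.
        container["volumeDevices"] = [{"name": "data", "devicePath": "/dev/bench"}]
    else:
        container["volumeMounts"] = [{"name": "data", "mountPath": "/data"}]
    spec: Dict[str, Any] = {
        "containers": [container],
        "volumes": [{"name": "data", "persistentVolumeClaim": {"claimName": pvc}}],
        "restartPolicy": "Never",
    }
    if node is not None:
        # Pin this client to one node when you need a fixed client set.
        match = {"key": "kubernetes.io/hostname", "operator": "In", "values": [node]}
        spec["affinity"] = {
            "nodeAffinity": {
                "requiredDuringSchedulingIgnoredDuringExecution": {
                    "nodeSelectorTerms": [{"matchExpressions": [match]}]
                }
            }
        }
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": "elbencho"}},
        "spec": spec,
    }


if __name__ == "__main__":
    print(json.dumps(elbencho_pod("bench-0", "bench-pvc", "4", "8Gi", node="worker-1"), indent=2))
```

Omitting `node` leaves placement to the scheduler, which suits the cluster-wide "fan-out" question; passing it answers the "what does one pod get" question.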

Multi-tenancy often changes the outcome more than storage media does. If one noisy workload floods queues, latency jumps even when average throughput looks fine. Software-defined Block Storage with QoS gives you a way to test fairness, not just raw output.
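One way to put a number on fairness is Jain's fairness index, a standard metric that elbencho does not report itself. This sketch applies it to per-tenant throughput you collect during a contended run; the tenant figures are hypothetical, and both sets add up to the same aggregate throughput.

```python
from typing import Sequence


def jain_fairness(throughputs: Sequence[float]) -> float:
    """Jain's fairness index: (sum x)^2 / (n * sum x^2).

    1.0 means every tenant got an equal share; 1/n means one tenant got everything.
    """
    n = len(throughputs)
    squares = sum(x * x for x in throughputs)
    if n == 0 or squares == 0:
        raise ValueError("need at least one non-zero throughput")
    total = sum(throughputs)
    return total * total / (n * squares)


if __name__ == "__main__":
    with_qos = [510.0, 495.0, 500.0, 490.0]  # MiB/s per tenant, hypothetical
    noisy_neighbor = [1600.0, 140.0, 130.0, 125.0]
    print(f"with QoS: {jain_fairness(with_qos):.3f}")
    print(f"noisy neighbor: {jain_fairness(noisy_neighbor):.3f}")
```

Same total, very different index: that is the "good averages, bad app behavior" pattern expressed as one number.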

Quick executive takeaway

If your production apps run in Kubernetes Storage, your benchmark should run there too. Otherwise, your board slide shows numbers that production will never hit.

Elbencho Storage Benchmark and NVMe/TCP

NVMe/TCP matters because it carries NVMe semantics over standard Ethernet. That makes it a common SAN alternative for clusters that want strong performance without specialized fabrics.

NVMe/TCP can shift the bottleneck to CPU and network behavior. When CPU per I/O rises, throughput can stall even if SSDs have room left. Latency can also spread when several clients share the same NIC queues.
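A back-of-the-envelope check makes the CPU point concrete: if each I/O costs a fixed amount of CPU time on the path you are measuring, the available cores cap IOPS regardless of SSD headroom. The per-I/O cost and device figure below are hypothetical placeholders; measure your own.

```python
def cpu_bound_iops(cores: float, cpu_us_per_io: float) -> float:
    """IOPS ceiling set by CPU alone: each core supplies 1,000,000 us of CPU per second."""
    return cores * 1_000_000 / cpu_us_per_io


def bottleneck(cores: float, cpu_us_per_io: float, device_iops: float) -> str:
    """Name whichever side caps IOPS first under this simple model."""
    return "cpu" if cpu_bound_iops(cores, cpu_us_per_io) < device_iops else "device"


if __name__ == "__main__":
    # 4 cores at 10 us of CPU per I/O -> 400,000 IOPS ceiling, below a 1M-IOPS device.
    print(cpu_bound_iops(4, 10), bottleneck(4, 10, 1_000_000))
```

Halving CPU per I/O doubles the ceiling, which is why CPU per I/O is worth tracking alongside IOPS.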

Elbencho helps because it stresses the whole path under fan-out. It can show when one node’s CPU limit, IRQ handling, or network drops drag down the cluster. Pair that view with Software-defined Block Storage policies so you can test the same layout with different protection settings, such as replication or erasure coding.
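Protection settings change how many bytes are written to media for each logical byte, which is one reason results shift between policies. A sketch for full-stripe writes only; partial-stripe erasure-coded writes can cost more because of read-modify-write, and the 3-copy and 4+2 layouts are just examples.

```python
from fractions import Fraction


def replication_factor(copies: int) -> Fraction:
    """Bytes stored per logical byte with N-way replication."""
    return Fraction(copies)


def erasure_factor(data_chunks: int, parity_chunks: int) -> Fraction:
    """Bytes stored per logical byte for a k+m erasure code, full-stripe writes."""
    return Fraction(data_chunks + parity_chunks, data_chunks)


def usable_capacity(raw_tb: float, factor: Fraction) -> float:
    """Logical capacity left after protection overhead."""
    return raw_tb / float(factor)


if __name__ == "__main__":
    for label, f in [("3 copies", replication_factor(3)), ("EC 4+2", erasure_factor(4, 2))]:
        print(f"{label}: {float(f):.2f}x write cost, "
              f"{usable_capacity(100.0, f):.1f} TB usable of 100 TB raw")
```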

What to watch in NVMe/TCP runs

Track p95 and p99 latency alongside CPU usage on both clients and storage nodes. Those metrics often explain “good averages, bad app behavior.”
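To see how a healthy-looking average can hide a bad tail, here is a nearest-rank percentile over raw latency samples. The sample values are hypothetical; elbencho can report latency statistics itself (see `--lat` and related options in its help), so this only matters when you post-process your own samples.

```python
import math
import statistics
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


if __name__ == "__main__":
    # 98 fast I/Os and 2 slow ones, in microseconds (hypothetical).
    lat_us = [200.0] * 98 + [5000.0] * 2
    print("mean", statistics.mean(lat_us))  # 296.0
    print("p95", percentile(lat_us, 95))    # 200.0
    print("p99", percentile(lat_us, 99))    # 5000.0
```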

Measuring Storage Performance with Elbencho

Define the workload shape before you start. Match block size and read/write mix to the app, and keep runtime long enough to reach steady state. Report variance across runs, not a single best number.
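A minimal way to report spread instead of a single best number, assuming you have one headline figure per repeated run (the IOPS values here are hypothetical).

```python
import statistics
from typing import Dict, Sequence


def summarize_runs(values: Sequence[float]) -> Dict[str, float]:
    """Min, max, mean, sample stdev, and coefficient of variation for repeated runs."""
    if len(values) < 2:
        raise ValueError("repeat the run at least twice to measure spread")
    mean = statistics.mean(values)
    stdev = statistics.stdev(values)
    return {
        "min": min(values),
        "max": max(values),
        "mean": mean,
        "stdev": stdev,
        "cv_pct": 100.0 * stdev / mean,
    }


if __name__ == "__main__":
    iops_per_run = [182_000.0, 176_500.0, 190_200.0]
    for key, value in summarize_runs(iops_per_run).items():
        print(f"{key:>6}: {value:,.1f}")
```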

Use consistent metrics across your tests: IOPS, throughput, average latency, p95, p99, CPU use, and network errors for NVMe/TCP. Add storage policy details as well, because protection settings can change write cost and tail behavior.
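For the network-error column, one Linux-specific option is to diff the kernel's TCP counters in /proc/net/snmp before and after a run. This sketch computes the share of retransmitted segments. Read the file in the node's network namespace, since the kernel NVMe/TCP initiator typically does not use the benchmark pod's namespace. Interface drops come from separate counters (for example `ip -s link`) and are not covered here.

```python
from typing import Dict


def tcp_counters(snmp_text: str) -> Dict[str, int]:
    """Parse the 'Tcp:' header/value line pair from /proc/net/snmp."""
    rows = [line.split() for line in snmp_text.splitlines() if line.startswith("Tcp:")]
    if len(rows) < 2:
        raise ValueError("no Tcp: section found")
    header, values = rows[0], rows[1]
    return {name: int(value) for name, value in zip(header[1:], values[1:])}


def retransmit_ratio(before: str, after: str) -> float:
    """Retransmitted segments per segment sent between two snapshots."""
    b, a = tcp_counters(before), tcp_counters(after)
    sent = a["OutSegs"] - b["OutSegs"]
    retrans = a["RetransSegs"] - b["RetransSegs"]
    return retrans / sent if sent else 0.0


if __name__ == "__main__":
    with open("/proc/net/snmp") as f:
        start = f.read()
    input("Run the benchmark, then press Enter...")
    with open("/proc/net/snmp") as f:
        end = f.read()
    print(f"TCP retransmit ratio: {retransmit_ratio(start, end):.4%}")
```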

Use this checklist to keep results comparable:

- Fix block size, read/write mix, queue depth, runtime, and a short warm-up window.

- Reserve CPU for benchmark pods, and avoid CPU throttling during the run.

- Choose cache behavior on purpose, then state it clearly in the report.

- Run at least three times, and report the spread, not only the peak.

- Capture network counters for NVMe/TCP, including drops and retransmits.
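The checklist maps onto a frozen config object that renders the same elbencho command every time. This is a sketch under stated assumptions: the flags used (`-w`, `-b`, `-t`, `-s`, `--iodepth`, `--timelimit`, `--lat`, `--direct`, `--rand`, `--hosts`) follow elbencho's help text as we understand it, `--rwmixpct` in particular should be checked against `elbencho --help` for your version, and the defaults and target path are hypothetical. Warm-up is handled here simply by running the same command once and discarding the result.

```python
import shlex
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class RunConfig:
    """One fixed workload shape; change only the storage policy between runs."""
    block_size: str = "4k"
    threads: int = 16
    iodepth: int = 16        # per-thread queue depth (async I/O)
    file_size: str = "20g"
    read_mix_pct: int = 0    # assumed to map to --rwmixpct; verify on your version
    time_limit_s: int = 300
    direct: bool = True      # bypass the page cache; state this in the report
    random: bool = True

    def command(self, target: str, hosts: Optional[Sequence[str]] = None) -> List[str]:
        cmd = ["elbencho"]
        if hosts:
            # Distributed mode: each host is assumed to run "elbencho --service".
            cmd += ["--hosts", ",".join(hosts)]
        cmd += ["-w", "-b", self.block_size, "-t", str(self.threads),
                "-s", self.file_size, "--iodepth", str(self.iodepth),
                "--timelimit", str(self.time_limit_s), "--lat"]
        if self.read_mix_pct:
            cmd += ["--rwmixpct", str(self.read_mix_pct)]
        if self.direct:
            cmd.append("--direct")
        if self.random:
            cmd.append("--rand")
        cmd.append(target)
        return cmd


if __name__ == "__main__":
    cfg = RunConfig(read_mix_pct=30)
    print(shlex.join(cfg.command("/data/bench.dat", hosts=["10.0.0.5", "10.0.0.6"])))
```

Because the config is frozen, the only way to change the workload is to create a new object, which makes accidental drift between runs easy to spot in review.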

Ways to Improve the Benchmark Signal and Reduce Noise

Start by validating the I/O path. Confirm whether the PVC uses filesystem mode or raw block mode (volumeMode: Filesystem or Block), and align the test to that mode. Next, confirm client resources. A CPU limit can raise latency and make storage look slow when the container's CPU quota, not the storage, is actually throttling the benchmark.
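To check whether the CPU quota is throttling the benchmark, one option on cgroup v2 nodes is to diff cpu.stat for the pod's cgroup (inside the container it is usually /sys/fs/cgroup/cpu.stat, an assumption that depends on your runtime). The nr_periods and nr_throttled fields appear only when the cpu controller is enabled for that cgroup. A sketch:

```python
from typing import Dict


def parse_cpu_stat(text: str) -> Dict[str, int]:
    """Parse cgroup v2 cpu.stat lines of the form 'key value'."""
    stats: Dict[str, int] = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2:
            stats[parts[0]] = int(parts[1])
    return stats


def throttled_fraction(before: str, after: str) -> float:
    """Share of quota periods in which the cgroup hit its CPU limit between snapshots."""
    b, a = parse_cpu_stat(before), parse_cpu_stat(after)
    periods = a.get("nr_periods", 0) - b.get("nr_periods", 0)
    throttled = a.get("nr_throttled", 0) - b.get("nr_throttled", 0)
    return throttled / periods if periods else 0.0


if __name__ == "__main__":
    path = "/sys/fs/cgroup/cpu.stat"  # assumption: cgroup v2 with a namespaced view
    with open(path) as f:
        start = f.read()
    input("Run the benchmark, then press Enter...")
    with open(path) as f:
        end = f.read()
    print(f"throttled in {throttled_fraction(start, end):.1%} of periods")
```

A value well above zero during the run means the results partly measure the CPU limit rather than the storage.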

Then address contention. Kubernetes Storage needs guardrails when multiple teams share the same platform. QoS, placement rules, and sane tenancy boundaries keep one workload from skewing everyone else's results.

Finally, test the layout you plan to run. Hyper-converged setups can reduce hops. Disaggregated storage can improve pool use and simplify upgrades. Both can work, but each shifts how you size NIC bandwidth, CPU, and failure domains for Software-defined Block Storage.

Side-by-Side Differences Table

This table summarizes how storage teams typically position elbencho alongside fio, another common benchmark tool.

| Category | elbencho | fio |
|---|---|---|
| Best fit | Multi-client, end-to-end delivery under load | Workload shaping and device-level tuning |
| Strong signal | Stragglers, imbalance, and tail drift | Queue depth effects, mix tuning, and latency histograms |
| Typical use in Kubernetes Storage | Cluster-style runs across many pods | Controlled runs on a single PVC |
| Risk if misused | Too many variables per run | Over-trusting single-node best case |
| NVMe/TCP insight | Fan-out bottlenecks across CPU and network | Per-job tuning that can miss cluster effects |

Simplyblock™ for Steady Benchmark Outcomes

Simplyblock™ helps teams reduce the drift that shows up between lab runs and production runs. It delivers Software-defined Block Storage built for Kubernetes Storage, with NVMe/TCP support for low-latency Ethernet fabrics.

Simplyblock uses an SPDK-based, user-space, zero-copy data path to reduce kernel overhead in the hot path. That design can improve IOPS per core and keep latency tighter under load, which matters in NVMe/TCP environments where CPU often limits scale.

Multi-tenancy and QoS also matter for benchmarking. Simplyblock lets teams test realistic contention while keeping guardrails in place. That makes Elbencho results more useful for SLO planning, platform sizing, and cost control.

Where Storage Benchmarking Goes Next

Benchmarking keeps shifting toward service behavior. Teams now focus on p99 latency, CPU-per-IO, and fairness under mixed load, not only headline IOPS.

Expect more benchmark runs packaged as Kubernetes-native workflows, with fixed pod specs, fixed placement, and telemetry capture baked in. As NVMe/TCP use grows, teams will also lean on hardware offload, such as DPUs and IPUs, plus user-space stacks that cut CPU cost while keeping throughput high.

Related Terms

Teams often review these glossary pages alongside elbencho Storage Benchmark.

Software-Defined Storage (SDS)

Distributed Storage System

Storage Area Network (SAN)

Infrastructure Processing Unit (IPU)

Questions and Answers

Elbencho is designed to scale load across threads and multiple clients with simple CLI control, so you can push a filesystem or storage backend with realistic parallelism without writing complex job files. It’s often used to expose saturation points and cross-node contention quickly. Compared to fio, it’s less about modeling every I/O knob and more about repeatable, distributed throughput/IOPS testing with clear live stats.

The biggest drivers are block size, number of threads, I/O mode (direct vs buffered), file count, and working-set size. Too-small datasets or buffered I/O can benchmark the page cache instead of storage. For shared filesystems, file layout and per-client concurrency matter as much as raw bandwidth, because metadata and lock contention can dominate. Treat the run as a workload model, not a max-speed contest.
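A quick way to size the working set so a run measures storage rather than the page cache. The 2x-RAM factor is a common rule of thumb, not an elbencho requirement, storage-side caches may need their own margin, and the RAM and file sizes below are hypothetical.

```python
import math


def min_dataset_bytes(client_ram_bytes: int, factor: float = 2.0) -> int:
    """Smallest per-client dataset that exceeds that client's RAM by `factor`."""
    return math.ceil(client_ram_bytes * factor)


def files_needed(dataset_bytes: int, file_bytes: int) -> int:
    """Number of files of `file_bytes` needed to cover `dataset_bytes`."""
    return math.ceil(dataset_bytes / file_bytes)


if __name__ == "__main__":
    gib = 2**30
    dataset = min_dataset_bytes(64 * gib)  # client node with 64 GiB RAM
    print(dataset // gib, "GiB per client,", files_needed(dataset, 4 * gib), "files of 4 GiB")
```

How those files map to elbencho threads and directories depends on the layout you choose, so treat the file count as a floor.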

Run elbencho in pods that mount the target PVC, and pin those pods to the node pool the application uses. Use direct I/O where possible, make sure the dataset exceeds client RAM, and coordinate multiple pods to emulate real client fan-out. Also record the StorageClass and CSI driver details so results are comparable across clusters. This aligns with storage performance benchmarking best practice.

High aggregate MB/s can coexist with poor p99 latency when queues build under contention or during background work (rebuild, GC, compaction). Elbencho can saturate bandwidth efficiently, which may mask the latency cliff that hurts databases and synchronous workloads. Always pair throughput with latency percentiles and node/target CPU signals to avoid “fast but spiky” configurations. Use p99 storage latency as a decision metric.
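That advice can be turned into a decision rule: pick the highest-throughput configuration whose p99 stays within the application's latency budget. The configuration names, numbers, and the 1 ms budget are hypothetical.

```python
from typing import NamedTuple, Optional, Sequence


class Result(NamedTuple):
    name: str
    mibps: float
    p99_us: float


def best_within_budget(results: Sequence[Result], p99_budget_us: float) -> Optional[Result]:
    """Highest-throughput result whose p99 latency meets the budget, else None."""
    eligible = [r for r in results if r.p99_us <= p99_budget_us]
    return max(eligible, key=lambda r: r.mibps) if eligible else None


if __name__ == "__main__":
    runs = [
        Result("config-a", mibps=3200.0, p99_us=4800.0),  # fast but spiky
        Result("config-b", mibps=2600.0, p99_us=900.0),
    ]
    print(best_within_budget(runs, p99_budget_us=1000.0))
```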

Keep the benchmark identical: same client count, threads, block size, dataset size, runtime, and I/O mode, and only change the storage policy you’re evaluating. Report IOPS, MB/s, and p95/p99 latency, plus a short note about the access pattern and concurrency level. If results differ wildly between runs, suspect node noise or cache effects before declaring a backend “faster.” For context, compare with fio storage benchmark runs using the same workload shape.
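A conservative sketch of the "suspect noise first" rule: only call one policy faster when its worst run beats the other policy's best run across repeated, identical runs. This range-overlap check is a simple heuristic, not a statistical test, and the IOPS values are hypothetical.

```python
from typing import Sequence


def compare_policies(a_runs: Sequence[float], b_runs: Sequence[float]) -> str:
    """Compare repeated results for two storage policies (higher is better)."""
    if min(b_runs) > max(a_runs):
        return "B faster"
    if min(a_runs) > max(b_runs):
        return "A faster"
    return "inconclusive: run-to-run ranges overlap"


if __name__ == "__main__":
    policy_a = [181_000.0, 176_000.0, 189_000.0]
    policy_b = [186_000.0, 192_000.0, 178_000.0]
    print(compare_policies(policy_a, policy_b))
```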