CSI for Block Storage

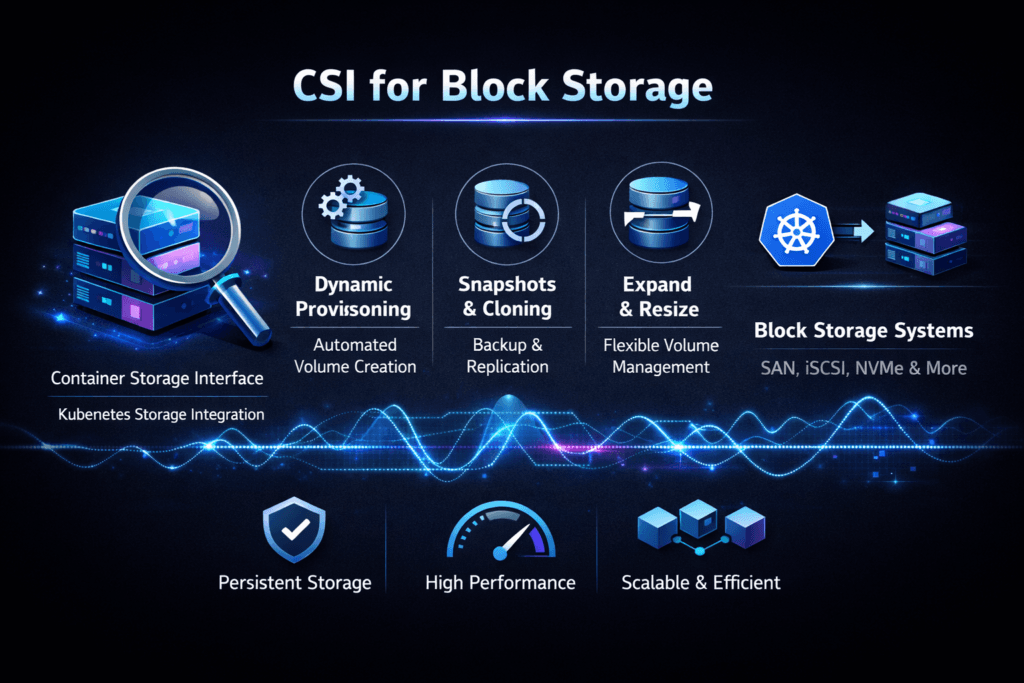

CSI for Block Storage is how Kubernetes provisions, attaches, and mounts block volumes through a CSI driver, instead of relying on legacy, in-tree volume plugins. The CSI driver acts as the contract between Kubernetes and a storage backend, so platform teams can standardize storage operations across clusters and vendors.

Block volumes matter when workloads need stable latency and consistent write behavior. Databases, queues, analytics metadata stores, and VM disks typically behave better on block devices than on object-style APIs. In Kubernetes Storage, CSI becomes the control point for storage lifecycle events such as dynamic provisioning, volume expansion, snapshots, node drains, and rescheduling. When CSI behaves well, stateful rollouts feel routine. When it behaves poorly, attach delays, retry storms, and pods stuck in “Terminating” become daily work.

Software-defined Block Storage raises the bar further because the “disk” a pod sees may come from remote NVMe media, with policy controls layered on top for multi-tenancy and QoS.

Running CSI for Block Storage at scale in production

CSI success at scale depends on two paths that must work together. The control path covers provisioning, attaching, detaching, resizing, and snapshots. The data path carries reads and writes once the node publishes the device to the pod. Many outages start in the control path, while many performance issues live in the data path.

A reliable CSI setup uses clear StorageClass tiers, stable node plugins, and strong idempotency in the driver so retries do not multiply failures. It also needs clean observability. If you cannot see provision times, attach times, and error rates, you end up guessing during incidents. When you run multi-tenant clusters, you also need guardrails so one namespace cannot create a backlog of volume operations that slows everyone else.
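The idempotency point can be made concrete. The CSI spec expects `CreateVolume` to be idempotent on the requested volume name. A retried call should therefore return the volume created by the first attempt rather than allocate a second one. The sketch below is a hypothetical in-memory stand-in, and `IdempotentProvisioner` is not a real driver API. A real driver would persist the name-to-volume mapping in the backend, not in process memory.

```python
import threading
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Volume:
    volume_id: str
    name: str
    capacity_bytes: int


class AlreadyExistsError(Exception):
    """The same name was requested again with incompatible parameters."""


class IdempotentProvisioner:
    """Hypothetical CreateVolume handler keyed by the requested volume name.

    Assumption: mappings live in memory for illustration only; a real driver
    must look them up in the backend so retries survive driver restarts.
    """

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._by_name: dict[str, Volume] = {}
        self.backend_allocations = 0  # counts calls that did real backend work

    def create_volume(self, name: str, capacity_bytes: int) -> Volume:
        with self._lock:
            existing = self._by_name.get(name)
            if existing is not None:
                if existing.capacity_bytes != capacity_bytes:
                    # Same name, different request: refuse instead of guessing.
                    raise AlreadyExistsError(name)
                return existing  # a retry: no new backend work
            volume = Volume(str(uuid.uuid4()), name, capacity_bytes)
            self.backend_allocations += 1
            self._by_name[name] = volume
            return volume
```

With this design, a retry after a timeout costs a lookup instead of a second allocation. Calling `create_volume("pvc-123", 10 * 2**30)` twice returns the same `Volume` and leaves `backend_allocations` at 1.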

CSI for Block Storage in Kubernetes Storage

CSI touches scheduling behavior more than many teams expect. StorageClass parameters, topology constraints, and access modes influence where pods can run and how quickly they start. A topology mismatch can delay scheduling. A slow attach can delay readiness. A noisy control plane can turn a rolling restart into a cluster-wide slowdown.
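To see why a topology mismatch can delay scheduling, consider how CSI topology works. A volume reports the topology segments it is accessible from, and a pod using it can only land on a node whose labels satisfy at least one segment. The sketch assumes segments are plain label maps keyed by the well-known `topology.kubernetes.io/zone` label. Node names and zones are illustrative.

```python
def node_can_use_volume(
    node_labels: dict[str, str], accessible_topology: list[dict[str, str]]
) -> bool:
    """True if the node matches every key/value pair of at least one segment."""
    if not accessible_topology:
        return True  # no topology constraint reported for this volume
    return any(
        all(node_labels.get(key) == value for key, value in segment.items())
        for segment in accessible_topology
    )


def eligible_nodes(
    nodes: dict[str, dict[str, str]], accessible_topology: list[dict[str, str]]
) -> list[str]:
    """Names of nodes where a pod bound to this volume could be scheduled."""
    return sorted(
        name
        for name, labels in nodes.items()
        if node_can_use_volume(labels, accessible_topology)
    )
```

If `eligible_nodes` comes back empty, or contains only nodes without spare CPU or memory, the pod stays Pending. Setting `volumeBindingMode: WaitForFirstConsumer` on the StorageClass lets Kubernetes pick the node first and then provision the volume in that node's topology, which avoids many of these mismatches.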

Three operational moments reveal CSI quality fast. Node drains test detach and reattach behavior. Autoscaling tests how the platform handles churn. StatefulSet rollouts test repeatability because they trigger predictable PVC and pod lifecycles. When these moments stay smooth, Kubernetes Storage teams can focus on application work instead of storage cleanup.

For Software-defined Block Storage, pair CSI lifecycle stability with per-tenant performance controls. Otherwise, even a “correct” CSI deployment can still deliver unstable p99 latency during mixed workload bursts.
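Per-tenant performance controls are commonly built on token buckets. The sketch below gives each namespace its own bucket, so a burst in one namespace drains only that namespace's tokens. It illustrates the idea only and does not describe how any particular backend, simplyblock included, enforces QoS. `TenantLimiter`, the rates, and the injectable clock are assumptions.

```python
import time
from typing import Callable


class TokenBucket:
    """Refills at `rate` tokens/s up to `burst`; each admitted I/O spends tokens."""

    def __init__(
        self, rate: float, burst: float, clock: Callable[[], float] = time.monotonic
    ) -> None:
        self.rate = rate
        self.burst = burst
        self.tokens = burst
        self._clock = clock
        self._last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        now = self._clock()
        # Refill for the elapsed time, never beyond the burst size.
        self.tokens = min(self.burst, self.tokens + (now - self._last) * self.rate)
        self._last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should queue or delay this tenant's I/O


class TenantLimiter:
    """Hypothetical per-namespace limiter: one independent bucket per tenant."""

    def __init__(
        self,
        limits: dict[str, tuple[float, float]],
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._buckets = {
            tenant: TokenBucket(rate, burst, clock)
            for tenant, (rate, burst) in limits.items()
        }

    def admit(self, tenant: str, ios: int = 1) -> bool:
        return self._buckets[tenant].try_acquire(ios)
```

The bucket allows short bursts up to `burst` and then holds each tenant to its long-run `rate`. That is what keeps one namespace's spike from consuming headroom that another namespace's p99 depends on.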

CSI for Block Storage and NVMe/TCP

NVMe/TCP influences CSI outcomes by shaping what happens after the volume becomes available. CSI decides when the device appears. NVMe/TCP decides how the device performs under load. If the data path runs hot on the CPU, tail latency will climb even when the capacity looks fine.

NVMe/TCP also changes how teams plan scaling. You often scale performance by adding initiators, increasing paths, and reserving CPU headroom on nodes, rather than by pushing queue depth or adding buffering. When you combine NVMe/TCP with Kubernetes Storage, you want a backend that keeps CPU efficiency high and supports policy controls, so Software-defined Block Storage stays consistent across tenants.
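Little's law (average outstanding I/Os = IOPS x average latency) shows why pushing queue depth is a poor scaling lever once a path saturates. The model is deliberately idealized and hypothetical. Each added path is assumed to contribute capacity linearly, and the base latency and IOPS ceiling are made-up inputs, not measurements.

```python
def closed_loop(
    outstanding_ios: int, iops_ceiling: float, base_latency_s: float
) -> tuple[float, float]:
    """Return (achieved IOPS, mean latency in ms) for a fixed queue depth.

    Below saturation every I/O completes in base_latency_s, so IOPS grows
    with depth. At saturation IOPS is capped, and Little's law
    (latency = outstanding / IOPS) makes latency grow with depth instead.
    """
    unsaturated_iops = outstanding_ios / base_latency_s
    iops = min(unsaturated_iops, iops_ceiling)
    latency_ms = outstanding_ios / iops * 1000
    return iops, latency_ms


def with_paths(per_path_iops: float, paths: int) -> float:
    """Hypothetical linear scaling: each added path contributes equal capacity."""
    return per_path_iops * paths
```

Consider a 100 µs base latency and a 200,000 IOPS ceiling. Doubling depth from 64 to 128 leaves IOPS at 200,000 and doubles mean latency from about 0.32 ms to 0.64 ms. Doubling the ceiling instead brings depth 128 back to about 0.32 ms; under the linear assumption, that corresponds to adding a second path.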

Proving performance and lifecycle stability

Measure CSI in two categories. First, measure lifecycle timing: provision duration, attach duration, mount duration, and variance during node drains and rolling upgrades. Second, measure runtime I/O: IOPS, throughput, and p95 and p99 latency under realistic block sizes and read/write mixes.
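Both categories reduce to the same summary: collect samples, then report tail percentiles next to the mean. The sketch below uses the nearest-rank percentile definition. That is one of several common definitions, and benchmarking tools may interpolate instead.

```python
import math
from statistics import mean


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of data at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def summarize(samples_ms: list[float]) -> dict[str, float]:
    """Mean plus the tail percentiles that the mean tends to hide."""
    return {
        "mean": mean(samples_ms),
        "p95": percentile(samples_ms, 95),
        "p99": percentile(samples_ms, 99),
    }
```

Take 98 samples at 1 ms plus one at 50 ms and one at 100 ms. The mean is 2.48 ms and p95 is 1 ms, but p99 is 50 ms, which is exactly the kind of tail a mean hides. The same functions work for attach or mount durations collected during a node drain.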

Run tests in conditions that resemble production. Use the same node types, the same CPU limits, the same network policies, and the same StorageClasses. Repeat tests while the cluster performs background work, because real clusters rarely sit idle. When lifecycle times swing widely, fix the control path. When latency rises sharply as load increases, investigate CPU saturation, network contention, or backend efficiency.
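The two rules of thumb at the end of that paragraph can be written as a small triage check. The thresholds (3x attach-time spread, 3x p99 growth) and the input shapes are hypothetical, so tune them against your own baselines.

```python
from statistics import median


def triage(
    attach_s_idle: list[float],
    attach_s_busy: list[float],
    p99_ms_by_load: dict[int, float],
    spread_limit: float = 3.0,
    growth_limit: float = 3.0,
) -> list[str]:
    """Suggest which path to investigate first. Thresholds are hypothetical."""
    findings = []
    # Control path: attach times under background work vs. an idle baseline.
    baseline = median(attach_s_idle)
    if max(attach_s_busy) > spread_limit * baseline:
        findings.append("control path: lifecycle times swing widely under load")
    # Data path: p99 latency at the highest offered load vs. the lowest.
    loads = sorted(p99_ms_by_load)
    low, high = p99_ms_by_load[loads[0]], p99_ms_by_load[loads[-1]]
    if high > growth_limit * low:
        findings.append(
            "data path: p99 climbs with load; check CPU saturation, "
            "network contention, or backend efficiency"
        )
    return findings
```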

Operational techniques that reduce incidents and p99 spikes

Most teams improve outcomes by tightening both lifecycle behavior and runtime isolation. Use this single checklist as your starting point:

- Standardize StorageClass tiers per workload type, and keep latency-first and throughput-first classes separate.

- Enforce topology rules so volumes land where pods can use them without cross-zone surprises.

- Tune timeouts and retries to avoid feedback loops during transient failures.

- Apply QoS and tenant isolation so one namespace cannot dominate shared performance.

- Reserve CPU headroom for NVMe/TCP paths, and avoid designs that waste cycles in the data plane.
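For the retry item, a common way to avoid synchronized retry storms is capped exponential backoff with full jitter. Each wait is drawn uniformly between zero and an exponentially growing ceiling, so many clients that failed together do not retry together. The sketch is generic, and `TransientError` and the base and cap values are assumptions. In practice, the CSI sidecars expose their own retry settings, and those are what you would usually tune.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, busy backend)."""


def backoff_delays(
    retries: int,
    base_s: float = 0.5,
    cap_s: float = 30.0,
    rng: Optional[random.Random] = None,
) -> list[float]:
    """Full-jitter delays: retry i waits uniform(0, min(cap, base * 2**i))."""
    rng = rng if rng is not None else random.Random()
    return [rng.uniform(0, min(cap_s, base_s * 2**i)) for i in range(retries)]


def retry(
    op: Callable[[], T],
    attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Call op up to `attempts` times, sleeping a jittered delay between tries."""
    for delay in backoff_delays(attempts - 1, rng=rng):
        try:
            return op()
        except TransientError:
            sleep(delay)
    return op()  # final attempt: any error propagates to the caller
```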

CSI-backed block volume options compared

CSI choices differ by operational fit, performance control, and how well they behave during churn. The table below frames the common approaches.

| Approach | What it optimizes | Typical trade-off | Best fit |
|---|---|---|---|
| Legacy in-tree plugins | Simplicity in older clusters | Limited feature growth and migration pressure | Transitional environments |
| Generic CSI with external arrays | Broad compatibility | Mixed attach behavior and uneven observability | Mixed vendor estates |
| Kubernetes-native Software-defined Block Storage | Policy, automation, and cluster-level control | Requires platform discipline | Multi-tenant production |
| NVMe/TCP-backed block with QoS | Low latency and predictable scaling | Needs CPU and network planning | I/O-intensive stateful apps |

Why Simplyblock™ keeps CSI stable under load

Simplyblock™ focuses on two outcomes Kubernetes teams care about: consistent lifecycle behavior and stable performance once the volume goes live. On the lifecycle side, that means predictable provisioning and attachment during churn, upgrades, and node maintenance. On the data path side, simplyblock targets an NVMe-first design with NVMe/TCP support, plus multi-tenancy and QoS so mixed workloads do not distort each other’s latency profiles.

That combination helps platform teams run Kubernetes Storage as a service with clear expectations, instead of treating storage as a per-namespace science project.

Where CSI is headed next

CSI keeps moving toward richer topology signals, tighter integration with workload placement, and clearer health reporting for automated recovery. At the same time, operators increasingly manage storage against SLOs, not “best effort” capacity pools. Expect more automation around placement, faster remediation workflows, and stronger guardrails for multi-tenant fairness.

On the transport side, NVMe/TCP adoption will keep pushing CPU efficiency and observability into the spotlight, because those factors decide whether Software-defined Block Storage stays steady under real cluster behavior.

Questions and Answers

CSI for block storage turns a PVC request into a real block volume, then wires it through controller-side provisioning/attach and node-side staging/publish so kubelet can hand the pod either a mounted filesystem or a raw device. The critical detail is that “provisioned” doesn’t mean “usable in a pod” until the node plugin completes staging and publishing for the scheduled node. Block Storage CSI covers that end-to-end mapping.
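The gap between “provisioned” and “usable in a pod” can be modeled as an ordered sequence of CSI calls. The phase names below follow the CSI RPCs `CreateVolume`, `ControllerPublishVolume`, `NodeStageVolume`, and `NodePublishVolume`. The state machine itself is a hypothetical illustration. It assumes a driver that implements both attach and staging, which are optional capabilities in CSI.

```python
from enum import Enum
from typing import Optional


class Phase(Enum):
    REQUESTED = 0    # PVC created, nothing provisioned yet
    PROVISIONED = 1  # CreateVolume succeeded; the PVC can bind
    ATTACHED = 2     # ControllerPublishVolume made it visible to one node
    STAGED = 3       # NodeStageVolume prepared it on that node
    PUBLISHED = 4    # NodePublishVolume exposed it at the pod's target path


class VolumeLifecycle:
    """Hypothetical model: phases must advance in order, on a single node."""

    def __init__(self) -> None:
        self.phase = Phase.REQUESTED
        self.node: Optional[str] = None

    def advance(self, to: Phase, node: Optional[str] = None) -> None:
        if to.value != self.phase.value + 1:
            raise ValueError(f"cannot go from {self.phase.name} to {to.name}")
        if to is Phase.ATTACHED:
            if node is None:
                raise ValueError("attach needs a target node")
            self.node = node
        elif to in (Phase.STAGED, Phase.PUBLISHED) and node != self.node:
            raise ValueError(f"{to.name} must run on the attached node {self.node}")
        self.phase = to

    def usable_by_pod_on(self, node: str) -> bool:
        # Provisioned alone is not enough: the scheduled node must be published.
        return self.phase is Phase.PUBLISHED and self.node == node
```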

In Filesystem mode, CSI formats (or validates) a filesystem and mounts it into the pod, so apps do file I/O and the kernel handles metadata and caching. In Block mode, the pod gets a raw device, and the app controls layout, alignment, and I/O patterns directly. The mode choice changes performance behavior, failure modes, and troubleshooting signals (mount errors vs device errors). See Kubernetes Volume Mode (Filesystem vs Block).
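In the Kubernetes API, the mode is the PVC's `spec.volumeMode`, and `Filesystem` is the default. It decides how the container consumes the volume: `volumeMounts` with a `mountPath` for Filesystem, or `volumeDevices` with a `devicePath` for Block. The minimal sketch below builds both pieces as plain dicts. The claim name, size, and StorageClass name are hypothetical.

```python
VALID_MODES = ("Filesystem", "Block")


def pvc_manifest(
    name: str, size: str, storage_class: str, volume_mode: str = "Filesystem"
) -> dict:
    """A PersistentVolumeClaim requesting the given volume mode."""
    if volume_mode not in VALID_MODES:
        raise ValueError(f"volumeMode must be one of {VALID_MODES}")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "volumeMode": volume_mode,
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


def container_volume_fields(volume_name: str, volume_mode: str, path: str) -> dict:
    """Container-spec fields that consume the claim in the given mode."""
    if volume_mode == "Filesystem":
        # kubelet mounts the formatted filesystem at mountPath.
        return {"volumeMounts": [{"name": volume_name, "mountPath": path}]}
    if volume_mode == "Block":
        # kubelet exposes the raw device node at devicePath; no filesystem.
        return {"volumeDevices": [{"name": volume_name, "devicePath": path}]}
    raise ValueError(f"unknown volumeMode: {volume_mode}")
```

Because `kubectl apply -f -` accepts JSON, the output of `json.dumps(pvc_manifest(...))` can be piped straight to it.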

Raw block is a good fit when the application wants direct device control, like databases managing their own block layout or when you need minimal filesystem overhead and predictable latency. It also helps when you need strict alignment, custom caching, or app-level replication above the volume. The tradeoff is more responsibility in the workload for formatting, integrity checks, and safe teardown after crashes. Kubernetes Raw Block Volume Support explains the pattern.
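Alignment is one concrete piece of that responsibility. With direct I/O on Linux, offsets and lengths generally need to be multiples of the device's logical block size, which is exposed at `/sys/block/<dev>/queue/logical_block_size`. The minimal sketch below assumes a 4096-byte block size as the default.

```python
def _check_block(block: int) -> None:
    if block <= 0 or block & (block - 1):
        raise ValueError("block size must be a positive power of two")


def is_aligned(offset: int, length: int, block: int = 4096) -> bool:
    """True if both the offset and the length are whole multiples of block."""
    _check_block(block)
    return offset % block == 0 and length % block == 0


def aligned_extent(offset: int, length: int, block: int = 4096) -> tuple[int, int]:
    """Smallest block-aligned (offset, length) covering [offset, offset + length)."""
    _check_block(block)
    start = offset - offset % block                 # round the start down
    end = -(-(offset + length) // block) * block    # round the end up
    return start, end - start
```

Consider an application that writes 100 bytes at offset 5000 on a raw volume. It would have to read, modify, and write the whole 4096-byte block starting at offset 4096, and that work is exactly what a filesystem would otherwise hide.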

Provisioning failures usually come from backend credentials, quotas, or class parameters, and show up as PVCs that never bind. Attach issues often appear as repeated controller retries or node selection/topology mismatches. Staging/publish failures are typically node-local problems: missing device paths, filesystem corruption, permissions, or “device busy” cleanup drift after restarts. Mapping the error to the lifecycle phase is the fastest way to pick the right logs and events to inspect.
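That mapping can be kept as a small lookup table. The symptom phrases below are hypothetical free-text descriptions paraphrased from the paragraph above, not real driver or kubelet log messages. The components listed are the usual places to look in a standard CSI deployment: PVC and pod events, the external-provisioner and external-attacher sidecars, VolumeAttachment objects, kubelet, and the node plugin.

```python
PHASES = {
    "provision": {
        "symptoms": ("never binds", "pvc pending", "quota", "credentials",
                     "class parameter"),
        "inspect": ("PVC events", "external-provisioner sidecar logs",
                    "CSI controller plugin logs"),
    },
    "attach": {
        "symptoms": ("controller retries", "topology mismatch", "attach timeout"),
        "inspect": ("VolumeAttachment objects", "external-attacher sidecar logs"),
    },
    "stage/publish": {
        "symptoms": ("missing device path", "device busy", "permission",
                     "filesystem corruption", "mount failed"),
        "inspect": ("pod events", "kubelet logs on the node",
                    "CSI node plugin logs"),
    },
}


def classify(symptom: str) -> list[str]:
    """Lifecycle phases whose hypothetical keywords appear in the symptom text."""
    text = symptom.lower()
    return [
        phase for phase, info in PHASES.items()
        if any(keyword in text for keyword in info["symptoms"])
    ]


def where_to_look(symptom: str) -> list[str]:
    """Inspection targets for every matching phase, in table order."""
    return [item for phase in classify(symptom) for item in PHASES[phase]["inspect"]]
```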

Benchmark with the same volume mode, block size, and concurrency your workload uses, because “great IOPS” can hide tail-latency spikes once queues build. For networked block storage, separate storage limits from node CPU/IRQ limits and transport overhead by watching p95/p99 latency alongside throughput. Also, confirm you’re measuring the mounted path (filesystem) or the device path (raw block) you’ll use in production; otherwise, the datapath can be materially different.
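One way to keep the benchmark honest is to generate the fio invocation from the same workload description you use for the PVC. The sketch below only builds an argument list. Its defaults (70/30 random read/write, 4k blocks, depth 16, four jobs) are placeholders to replace with your workload's real shape. Be careful: pointing a write workload at a raw device overwrites whatever is on it.

```python
import shlex

MIXED_MODES = {"randrw", "rw", "readwrite"}


def fio_args(
    target: str,
    volume_mode: str,
    rw: str = "randrw",
    read_pct: int = 70,
    block_size: str = "4k",
    iodepth: int = 16,
    numjobs: int = 4,
    runtime_s: int = 120,
    file_size: str = "10G",
) -> list[str]:
    """Build fio arguments that exercise the same path the pod will use."""
    args = [
        "fio", "--name=csi-bench", f"--rw={rw}", f"--bs={block_size}",
        f"--iodepth={iodepth}", f"--numjobs={numjobs}",
        "--ioengine=libaio", "--direct=1",  # bypass the page cache
        "--time_based", f"--runtime={runtime_s}",
        "--group_reporting", "--output-format=json",
    ]
    if rw in MIXED_MODES:
        args.append(f"--rwmixread={read_pct}")
    if volume_mode == "Block":
        # Raw device, e.g. the devicePath from volumeDevices. Writes are destructive.
        args.append(f"--filename={target}")
    elif volume_mode == "Filesystem":
        # Test files are created under the mounted directory.
        args += [f"--directory={target}", f"--size={file_size}"]
    else:
        raise ValueError(f"unknown volume mode: {volume_mode}")
    return args


def as_command_line(args: list[str]) -> str:
    """Shell-quoted command line, e.g. for a Job or kubectl exec."""
    return shlex.join(args)
```

For example, `as_command_line(fio_args("/dev/xvdb", "Block"))` yields a command to run inside a pod that has the raw device attached. fio's JSON output reports completion-latency percentiles alongside IOPS and bandwidth, so p95/p99 come from the same run as the throughput numbers.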