CSI vs In-Tree Storage Plugins

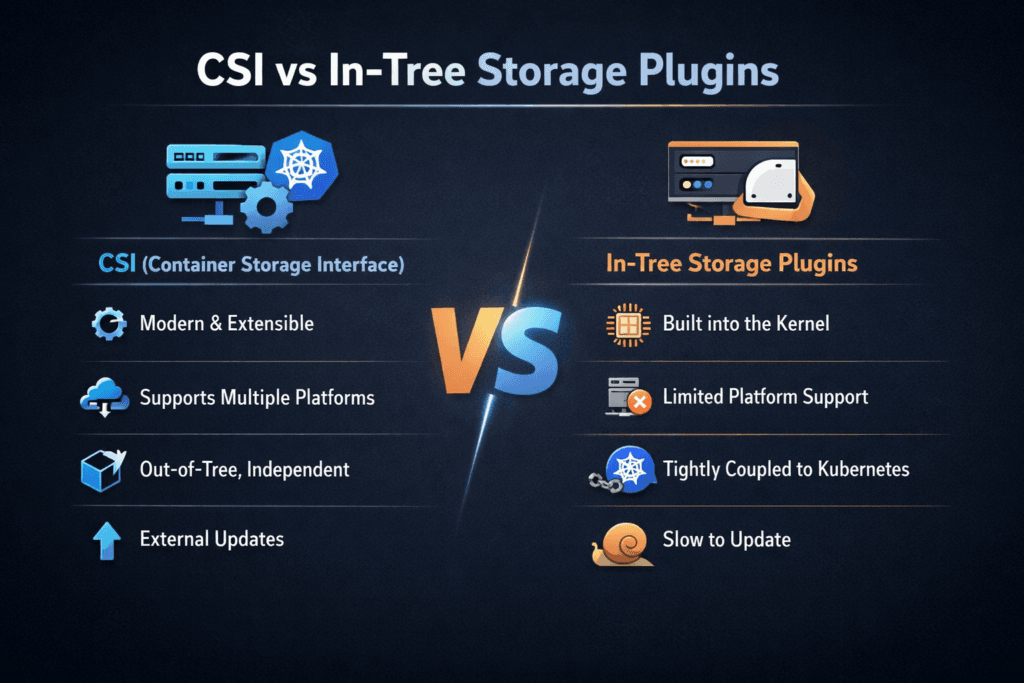

CSI and in-tree storage plugins are two ways Kubernetes connects workloads to persistent storage. In-tree plugins ship inside Kubernetes itself. CSI (Container Storage Interface) uses external drivers that Kubernetes calls through a standardized interface. The choice affects upgrade safety, day-two operations, and how consistently stateful apps behave under load.

In-tree plugins can feel convenient because they are “built-in,” but they also couple storage behavior to the Kubernetes release cycle. CSI reduces that coupling by moving the storage integration out of the core. Platform leaders typically prefer CSI because it lowers upgrade risk and expands operational tooling. SRE and DevOps teams prefer it because controller and node components provide clearer troubleshooting signals. In production Kubernetes Storage, teams also want volume-level policy and QoS from Software-defined Block Storage, especially when shared clusters host databases and mixed workloads. NVMe/TCP often enters the picture when organizations need high throughput over standard Ethernet while keeping a consistent operations model.

Why teams move off in-tree storage paths

Most organizations standardize on CSI because it matches how Kubernetes platforms run in real life: frequent upgrades, node churn, and multiple clusters with different lifecycles. CSI drivers can ship features and fixes without waiting for a Kubernetes core release, which shortens time-to-remediation when something breaks in production.

In-tree plugins still appear in older clusters, legacy distributions, or environments mid-migration. They can work, but the coupling shows up during upgrades and during advanced workflows. Teams often hit limits around snapshots, cloning, resizing behavior, topology alignment, and policy enforcement. CSI reduces those gaps when the driver and backend support the capability set.

Operational Impact on Kubernetes Storage

In Kubernetes Storage, the control plane and node path matter as much as raw media speed. CSI separates responsibilities into controller-side services (provisioning, snapshots, resizing, and attaching volumes to nodes through controller publish operations) and node-side services (staging and mounting the volume for the pod). That separation gives platform teams explicit places to monitor failures and performance drift.
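The controller/node split can be made concrete with a small lookup. The sketch below groups a subset of RPC names from the CSI specification by the service that implements them; the `owning_service` helper is a hypothetical convenience for illustration, not part of any driver.

```python
# A subset of CSI RPC names, grouped by the service that implements them.
# The RPC names come from the CSI spec; the helper is illustrative only.
CSI_RPCS = {
    "controller": {
        "CreateVolume", "DeleteVolume",
        "ControllerPublishVolume", "ControllerUnpublishVolume",
        "CreateSnapshot", "DeleteSnapshot",
        "ControllerExpandVolume",
    },
    "node": {
        "NodeStageVolume", "NodeUnstageVolume",
        "NodePublishVolume", "NodeUnpublishVolume",
        "NodeExpandVolume", "NodeGetVolumeStats",
    },
}


def owning_service(rpc: str) -> str:
    """Return 'controller' or 'node' for a listed CSI RPC name."""
    for service, rpcs in CSI_RPCS.items():
        if rpc in rpcs:
            return service
    raise ValueError(f"unknown or unlisted CSI RPC: {rpc}")
```

A slow `ControllerPublishVolume` points at the controller deployment, while a slow `NodeStageVolume` points at the node plugin on a specific host, so the two can be monitored separately.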

In-tree plugins run through code integrated into Kubernetes binaries. A cluster upgrade can shift storage behavior in ways that are difficult to isolate. Stateful workloads tend to show the impact first because they depend on consistent attach and mount flows. CSI keeps the interface stable while the driver evolves, which lowers the blast radius of Kubernetes upgrades and helps teams standardize operations across clusters.

For database and platform owners, the key question is not “Does it attach?” but “Does it attach fast, repeatedly, under node churn?” CSI provides clearer lifecycle telemetry, while a storage backend with QoS can protect latency-sensitive workloads from noisy-neighbor effects.
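One way to turn "does it attach fast, repeatedly" into a check is to compare recent attach times with a known-good baseline. This is a minimal sketch: the function name, the use of the median, and the 1.5x tolerance are all assumptions chosen for illustration, and the timings would come from whatever lifecycle telemetry the cluster already collects.

```python
from statistics import median


def attach_drift(baseline_s, recent_s, tolerance=1.5):
    """Compare median attach times (seconds) against a baseline.

    Returns (ratio, drifted). 'tolerance' is an assumed threshold:
    a ratio above it is treated as drift worth alerting on.
    """
    if not baseline_s or not recent_s:
        raise ValueError("both sample lists must be non-empty")
    ratio = median(recent_s) / median(baseline_s)
    return ratio, ratio > tolerance
```

For example, `attach_drift([4, 5, 6], [9, 10, 11])` reports a ratio of 2.0 and flags drift, while samples that stay near the baseline do not.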

Transport choices, NVMe/TCP, and the data path

CSI defines the integration contract, but the transport and datapath set the performance ceiling and tail behavior. NVMe/TCP can deliver strong throughput on standard Ethernet, and it fits both hyper-converged and disaggregated layouts. Disaggregated layouts often match Kubernetes growth patterns because compute and storage scale independently.

Efficiency becomes a gating factor at scale. When the datapath consumes too much CPU per I/O, jitter rises during bursts, and p99 latency climbs. Teams often pair CSI with storage engines built around SPDK-style user-space concepts to reduce copies and context switches. That design choice matters most in shared clusters where many workloads contend for CPU time and network queues.
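The CPU-per-I/O argument reduces to simple arithmetic: cores consumed by the datapath equal IOPS multiplied by CPU time per I/O. The sketch below works that out; the figures in the usage note are illustrative assumptions, not measurements of any particular storage engine.

```python
def cores_for_io(iops: float, cpu_us_per_io: float) -> float:
    """Cores kept busy by the datapath at a given IOPS rate.

    cpu_us_per_io is CPU time spent per I/O, in microseconds.
    One core provides 1,000,000 microseconds of CPU time per second.
    """
    return iops * cpu_us_per_io / 1_000_000
```

Under these assumed numbers, 500,000 IOPS at 10 µs of CPU per I/O keeps 5 cores busy, while the same load at 2 µs per I/O needs 1 core. The difference is CPU that shared workloads would otherwise compete for during bursts.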

How to benchmark and monitor storage integration behavior

Benchmarking should validate steady-state I/O and lifecycle timing. Steady-state metrics include IOPS, bandwidth, average latency, and p95/p99 latency at the volume and workload layer. Lifecycle timing includes time-to-provision, time-to-attach, time-to-mount, and time-to-recover after rescheduling.

A useful test plan includes two tracks. One track measures a single workload under stable queue depth. The other track adds interference that reflects production, such as backup traffic, compaction, or scan-heavy analytics. When the platform is healthy, the noisy workload should not collapse latency targets for the latency-sensitive workload. This style of testing also surfaces whether in-tree behavior changes across Kubernetes upgrades.
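The two-track plan can be summarized with a few lines of analysis code. This sketch assumes latency samples in milliseconds have already been collected for each track; the function names and the nearest-rank percentile method are choices made here for illustration.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def summarize(latencies_ms):
    """Average, p95, and p99 latency for one benchmark track."""
    return {
        "avg": sum(latencies_ms) / len(latencies_ms),
        "p95": percentile(latencies_ms, 95),
        "p99": percentile(latencies_ms, 99),
    }


def compare_tracks(baseline_ms, contended_ms, p99_target_ms):
    """Compare the quiet track with the track that adds interference."""
    base = summarize(baseline_ms)
    noisy = summarize(contended_ms)
    return {
        "baseline": base,
        "contended": noisy,
        "p99_inflation": noisy["p99"] / base["p99"],
        "meets_target": noisy["p99"] <= p99_target_ms,
    }
```

Running the same comparison before and after a Kubernetes upgrade gives a like-for-like view of whether storage behavior changed.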

Migration and tuning moves that improve outcomes

Most improvements come from reducing variability, tightening controls, and treating lifecycle timing as an SLO.

- Standardize StorageClasses and map them to workload intent, such as “database,” “general,” and “backup.”

- Enforce volume-level QoS in the Software-defined Block Storage layer so priority workloads keep their latency budget.

- Validate attach and mount timing under node churn, and alert on drift before incidents hit production.

- Use topology-aware placement where it matters, and avoid cross-zone or cross-rack penalties for latency-sensitive tiers.

- Separate background jobs from OLTP tiers using policy, scheduling, and storage pool design.
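The first bullets above can be sketched as generated StorageClass manifests. The StorageClass fields used here are standard Kubernetes fields, but the provisioner name `csi.example.com`, the `sc-` naming scheme, and the `qos_tier` parameter are hypothetical placeholders: real parameter names depend entirely on the CSI driver in use.

```python
import json

# Hypothetical driver name; replace with the installed CSI driver's name.
PROVISIONER = "csi.example.com"

# Workload intent mapped to driver parameters. The 'qos_tier' key is an
# assumed example; StorageClass parameter values must be strings.
INTENTS = {
    "database": {"qos_tier": "high"},
    "general": {"qos_tier": "standard"},
    "backup": {"qos_tier": "low"},
}


def storage_class(intent, params):
    """Build a StorageClass manifest (as a dict) for one workload intent."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": f"sc-{intent}"},
        "provisioner": PROVISIONER,
        "parameters": dict(params),
        # Delay binding until a pod is scheduled so placement can follow topology.
        "volumeBindingMode": "WaitForFirstConsumer",
        "allowVolumeExpansion": True,
        "reclaimPolicy": "Delete",
    }


def render_all():
    """Return all StorageClasses as a JSON list, one entry per intent."""
    return json.dumps(
        [storage_class(name, params) for name, params in INTENTS.items()],
        indent=2,
    )
```

Generating the classes from one table keeps names and policies consistent across clusters. `kubectl apply -f` accepts JSON, so the individual manifests can be applied directly.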

CSI and in-tree integration patterns compared

The table below summarizes differences that tend to show up after a few upgrade cycles and real incident response.

| Capability Area | In-Tree Plugins | CSI Drivers |
|---|---|---|
| Upgrade coupling | Tied to Kubernetes releases | Driver evolves independently |
| Lifecycle operations | Limited, varies by plugin | Standardized snapshots, cloning, and resizing where the driver supports them |
| Observability and troubleshooting | Harder to isolate the root cause | Clear controller and node paths |
| Policy and QoS integration | Often minimal | Stronger alignment with SDS backends |
| Multi-cluster portability | Lower | Higher |

Storage governance with Simplyblock™

Simplyblock™ supports CSI-based Kubernetes deployments with an NVMe-first architecture that fits hyper-converged, disaggregated, and hybrid models. With NVMe/TCP, simplyblock can scale on standard Ethernet while maintaining consistent operational behavior across clusters. For platform teams, the differentiator is governance: QoS, multi-tenancy, and policy-driven volume lifecycle operations aligned with how production Kubernetes Storage runs.

Because simplyblock is Software-defined Block Storage, it can apply per-volume controls that reduce noisy-neighbor risk in shared clusters. An SPDK-based user-space datapath also improves CPU efficiency, which helps stabilize tail latency when clusters run dense node packing or high queue depth.

Where the ecosystem is heading

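Kubernetes distributions and tooling keep pushing CSI as the standard integration path. Upstream Kubernetes has deprecated its in-tree volume plugins for the major cloud providers in favor of CSI migration, and several have already been removed, so legacy in-tree paths receive less investment over time.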

Platform teams are also asking for stronger automation around lifecycle operations, clearer upgrade posture, and tighter alignment between workload intent and storage policy. Hardware offload adoption is rising as well, with DPUs and IPUs helping reduce host CPU burn and enforce isolation earlier in the datapath.

Related Terms

Teams often review these glossary pages alongside CSI vs In-Tree Storage Plugins.

- CSI Topology Awareness

- CSI Volume Lifecycle

- CSI Performance Overhead

- Block Storage for Stateful Kubernetes Workloads

Questions and Answers

Why did Kubernetes move from in-tree storage plugins to CSI?

In-tree storage plugins are built into Kubernetes core, so new features and bug fixes often have to wait for Kubernetes releases. With CSI, storage drivers move out of the core, letting vendors ship updates on their own schedule, add features faster, and keep the core simpler. CSI also standardizes how snapshots, volume expansion, and topology are handled, which used to differ across in-tree plugins. Today, CSI is the standard replacement model.

CSI vs in-tree plugins: how does the volume lifecycle differ, and how does troubleshooting change?

With in-tree plugins, many operations were executed by Kubernetes core components, so failures often required deep kube-controller-manager or kubelet debugging. With CSI, the control-plane and node responsibilities are pushed into driver components and sidecars, making logs and failure domains clearer. When a PVC hangs, you usually inspect controller + sidecars; when a mount fails, you focus on node plugin + kubelet events. This separation is captured in the CSI architecture.
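The triage split described above can be written down as a lookup from Kubernetes event reason to the component worth inspecting first. The event reasons and sidecar names are ones commonly seen in CSI-based clusters; the mapping itself is a suggested starting point, not an official rule, and `where_to_look` is a hypothetical helper.

```python
# Event reason -> (failure domain, components to inspect first).
# The mapping is a heuristic; individual drivers may behave differently.
TRIAGE = {
    "ProvisioningFailed": (
        "controller",
        ["CSI controller plugin", "external-provisioner sidecar"],
    ),
    "FailedAttachVolume": (
        "controller",
        ["CSI controller plugin", "external-attacher sidecar"],
    ),
    "VolumeResizeFailed": (
        "controller",
        ["CSI controller plugin", "external-resizer sidecar"],
    ),
    "FailedMount": (
        "node",
        ["CSI node plugin", "kubelet events"],
    ),
}


def where_to_look(reason):
    """Return (domain, components) for an event reason, or a generic fallback."""
    fallback = ("unknown", ["events on the PVC and pod"])
    return TRIAGE.get(reason, fallback)
```

For instance, `where_to_look("FailedMount")` points at the node plugin and kubelet events on the affected node, rather than the controller deployment.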

How does CSI migration handle existing in-tree volumes?

Kubernetes introduced CSI migration so existing in-tree PVs can be “translated” to CSI drivers while keeping the same PVC/PV objects and app semantics. In practice, the cluster gradually switches provisioning and attach/mount handling to CSI, but you must ensure the CSI driver is installed and supports migration for that provider, and that feature gates and settings match your Kubernetes version. Plan upgrades around storage operations because migration issues typically surface during attach/mount churn.
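Before a migration window, it helps to know which PVs still carry an in-tree volume source. The sketch below classifies PV objects shaped like the items returned by `kubectl get pv -o json`. The spec field names and the CSI driver names they map to are the upstream ones; the `classify_pv` and `inventory` helpers are hypothetical, and the table covers only a few common providers.

```python
# In-tree PV spec field -> CSI driver that CSI migration translates it to.
IN_TREE_SOURCES = {
    "awsElasticBlockStore": "ebs.csi.aws.com",
    "gcePersistentDisk": "pd.csi.storage.gke.io",
    "azureDisk": "disk.csi.azure.com",
    "azureFile": "file.csi.azure.com",
    "cinder": "cinder.csi.openstack.org",
    "vsphereVolume": "csi.vsphere.vmware.com",
}


def classify_pv(pv):
    """Return (kind, driver) for one PV dict.

    kind is 'csi', 'in-tree', or 'other'. For in-tree PVs, driver is the
    CSI driver expected to handle the volume after migration.
    """
    spec = pv.get("spec", {})
    if "csi" in spec:
        return "csi", spec["csi"].get("driver")
    for field, target_driver in IN_TREE_SOURCES.items():
        if field in spec:
            return "in-tree", target_driver
    return "other", None


def inventory(pvs):
    """Map PV name -> classification for a list of PV dicts."""
    return {pv["metadata"]["name"]: classify_pv(pv) for pv in pvs}
```

PVs reported as `in-tree` are the ones to exercise in validation tests, since their attach and mount paths change when migration is enabled.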

How do snapshots and volume expansion differ between CSI and in-tree plugins?

CSI defines explicit RPCs, and Kubernetes uses standard CRDs and controllers for snapshot and expansion workflows. In-tree plugins historically had uneven support and different behaviors per provider, especially for online expansion and snapshot semantics. CSI’s sidecar model also makes capabilities discoverable and versioned, so clusters can reason about what a driver supports instead of relying on provider-specific logic. See CSI Snapshot Architecture for the snapshot side.
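To illustrate how uniform these workflows are under CSI, the sketch below builds a `VolumeSnapshot` manifest and a PVC expansion patch as plain dicts. The API group, kinds, and field names are the standard Kubernetes ones; the object names and sizes passed in are placeholders, and both operations only succeed if the installed driver supports snapshots and expansion.

```python
def volume_snapshot(name, pvc_name, snapshot_class):
    """Build a VolumeSnapshot manifest that snapshots an existing PVC."""
    return {
        "apiVersion": "snapshot.storage.k8s.io/v1",
        "kind": "VolumeSnapshot",
        "metadata": {"name": name},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": pvc_name},
        },
    }


def expansion_patch(new_size):
    """Build a PVC patch that requests a larger size, e.g. '200Gi'.

    Expansion also requires allowVolumeExpansion: true on the StorageClass.
    """
    return {"spec": {"resources": {"requests": {"storage": new_size}}}}
```

The same two shapes apply regardless of which CSI driver backs the volume, which is the practical difference from provider-specific in-tree behavior.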

Where do in-tree plugins still show up, and what are the risks?

You’ll still see in-tree behavior in older clusters, legacy managed Kubernetes defaults, or long-lived PVs created before CSI migration was enabled. The main risks are slower feature adoption, harder debugging, and tighter coupling to Kubernetes upgrades. The practical approach is to standardize new provisioning on CSI and migrate legacy PVs in a controlled window with validation tests that include node drains, rolling restarts, and restore-from-snapshot workflows.