Erasure Coding Rebuild Performance


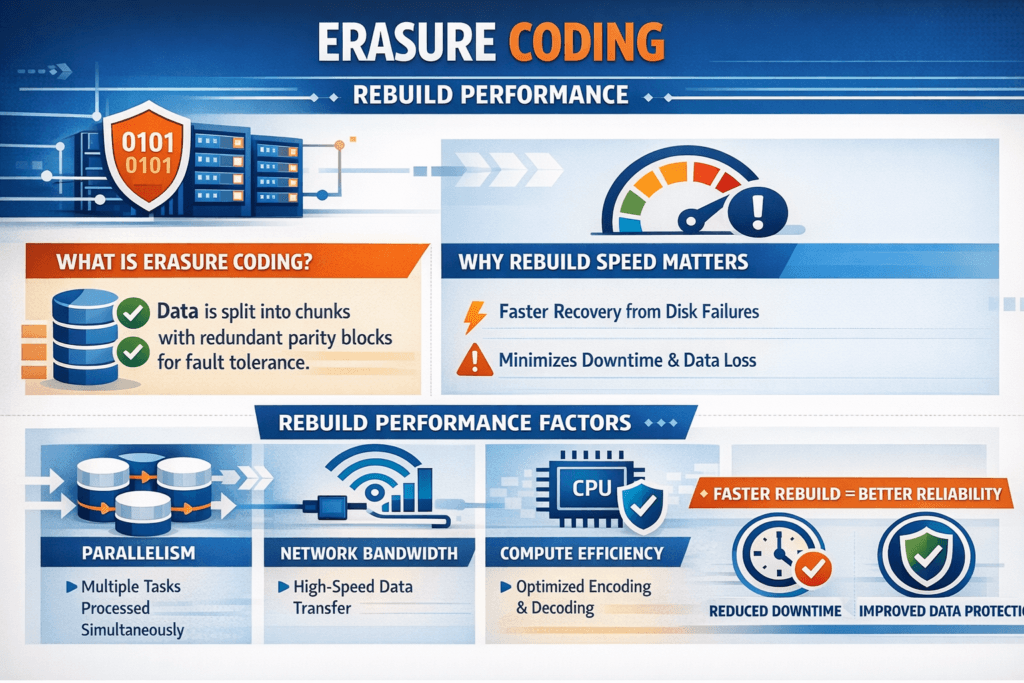

Erasure Coding Rebuild Performance describes how fast a storage system restores full protection after a drive, node, or network path fails, and how well it keeps application I/O steady during repair. With erasure coding, the system splits data into fragments, adds parity, and spreads those fragments across nodes. When a fragment goes missing, the system reads the remaining fragments, rebuilds the missing piece with parity math, and writes a replacement fragment to healthy capacity.
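Here is a minimal Python sketch of the simplest erasure code that shows the repair step: k data fragments plus one XOR parity fragment (m = 1, similar to RAID-5 parity). It illustrates the idea under that assumption and is not simplyblock's codec. Systems that tolerate two or more losses typically use Reed-Solomon-style codes, but the repair flow is the same: read the survivors, decode, and write the replacement.

```python
from __future__ import annotations

from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length fragments."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal fragments (zero-padded) and append one XOR parity fragment."""
    frag_len = -(-len(data) // k)  # ceiling division
    padded = data.ljust(frag_len * k, b"\0")
    fragments = [padded[i * frag_len:(i + 1) * frag_len] for i in range(k)]
    return fragments + [reduce(xor_bytes, fragments)]


def rebuild(fragments: list[bytes | None]) -> list[bytes]:
    """Recover one missing fragment (data or parity) by XOR-ing all survivors."""
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) != 1:
        raise ValueError("single-parity XOR can repair exactly one lost fragment")
    survivors = [f for f in fragments if f is not None]
    restored = list(fragments)
    restored[missing[0]] = reduce(xor_bytes, survivors)
    return restored


if __name__ == "__main__":
    stripe = encode(b"hello erasure coding", k=4)
    damaged = list(stripe)
    damaged[2] = None  # simulate losing the drive that held fragment 2
    assert rebuild(damaged) == stripe
```

Every repair reads all k surviving fragments to produce one replacement, which is why rebuild consumes network bandwidth and CPU in proportion to stripe width.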

This metric matters because a degraded system has less redundancy: if another failure arrives before repair completes, the loss can exceed what parity can recover. Rebuild performance also shapes user experience, because rebuild traffic competes with foreground reads and writes. A strong design repairs quickly while keeping tail latency under control for databases, analytics, and messaging platforms.

Executive teams usually want two numbers: the time to return to full protection and the change in p99 latency during the event. Operations teams also want to know whether the platform can enforce limits at the pool, volume, and tenant levels.

Tuning Rebuild Behavior in Software-defined Block Storage

In Software-defined Block Storage, rebuild work should follow policy, not luck. Teams get better outcomes when they allocate a clear “repair budget” and protect foreground I/O first. That approach supports a SAN-alternative strategy because it keeps service levels stable on standard servers instead of relying on large storage controllers.
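One common way to implement a repair budget is a token bucket that rebuild I/O must draw from before it is issued, while foreground I/O bypasses the bucket entirely. The sketch below assumes a single per-pool budget; the class `RepairBudget` and its parameters are hypothetical and not a simplyblock API.

```python
import time


class RepairBudget:
    """Hypothetical token bucket capping rebuild bandwidth for one pool.

    Tokens are bytes. They refill at rate_bytes_per_s up to burst_bytes.
    Only rebuild I/O calls try_consume; foreground I/O is never throttled here.
    """

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float, clock=time.monotonic):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes
        self.clock = clock  # injectable so tests can use a fake clock
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def try_consume(self, nbytes: int) -> bool:
        """Debit the bucket and return True if a rebuild I/O of nbytes fits the budget now."""
        self._refill()
        if nbytes <= self.tokens:
            self.tokens -= nbytes
            return True
        return False  # caller should defer this rebuild I/O and retry later
```

A rebuild worker that gets `False` simply waits and retries, so repair progresses at the budgeted rate without ever queuing ahead of application requests.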

Placement and layout choices also change rebuild outcomes. Wide striping can speed repair by pulling fragments from many sources, but poor placement can overload a rack uplink or a single node. Topology-aware placement spreads reads across failure domains and reduces cross-rack traffic. CPU efficiency plays a big role as well, because parity math burns cycles, especially during write-heavy periods. SPDK-style user-space I/O can free CPU time by reducing kernel overhead and copy paths, which benefits rebuild traffic and application workloads at the same time.
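A minimal sketch of topology-aware placement, assuming a k+m stripe and a topology given as a hypothetical `{rack: [nodes]}` mapping. Fragments go round-robin across racks, and a check confirms that losing any single rack removes at most m fragments, so the stripe stays rebuildable and repair reads come from several racks instead of one. Real placement engines also weigh free capacity and link load; this only shows the failure-domain rule.

```python
from collections import defaultdict


def place_fragments(k: int, m: int, topology: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Assign k+m fragments to (rack, node) pairs, round-robin across racks.

    No node receives two fragments of the same stripe.
    """
    racks = sorted(topology)
    cursors = {rack: 0 for rack in racks}  # next unused node index per rack
    placement: list[tuple[str, str]] = []
    i = 0
    while len(placement) < k + m:
        if all(cursors[r] >= len(topology[r]) for r in racks):
            raise ValueError("not enough nodes for a k+m stripe")
        rack = racks[i % len(racks)]
        i += 1
        if cursors[rack] < len(topology[rack]):
            placement.append((rack, topology[rack][cursors[rack]]))
            cursors[rack] += 1
    return placement


def survives_rack_loss(placement: list[tuple[str, str]], m: int) -> bool:
    """A stripe survives any single rack failure if no rack holds more than m fragments."""
    per_rack: dict[str, int] = defaultdict(int)
    for rack, _node in placement:
        per_rack[rack] += 1
    return max(per_rack.values()) <= m
```

With four racks, a 4+2 stripe lands at most two fragments per rack and passes the check; squeeze the same stripe into two racks and it fails, because one rack outage would remove three fragments.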


Erasure Coding Rebuild Performance in Kubernetes Storage

In Kubernetes Storage, repair events often overlap with routine operations. A node drain, a rolling update, or autoscaling can move pods while the storage layer repairs fragments. That overlap can push latency up unless the platform honors workload priority and keeps rebuild load within limits.

Deployment style matters, too. Hyper-converged storage can cut hops for some reads and writes. Disaggregated storage can improve failure isolation and let teams scale storage without scaling compute. Many enterprises use a mixed model across bare-metal clusters and virtualized pools, so they need consistent rebuild controls in every environment. When the storage platform enforces QoS during degraded mode, stateful services keep serving traffic while the system repairs protection in the background.

Erasure Coding Rebuild Performance and NVMe/TCP

NVMe/TCP changes rebuild dynamics because it delivers high throughput on standard Ethernet. Rebuild workflows run many parallel reads, and they benefit from a transport that scales across nodes without special fabric gear. Teams can keep operations consistent across racks and sites while they still use fast NVMe media.

Even with NVMe, rebuild can hit CPU or network limits first. Parity math, checksums, and copy paths can eat cores, and east-west traffic can saturate links. A data path that uses zero-copy and efficient queues can move more data per core, which reduces rebuild time and protects application latency. Some fleets also keep a path to NVMe-oF upgrades, including RDMA tiers for the most latency-sensitive volumes, while they keep the broader pool on NVMe/TCP.
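A back-of-the-envelope model shows why CPU or network, not media, often sets rebuild time. The sketch assumes a k+m code where rebuilding each lost byte reads k surviving bytes, that transfer, decode, and writes pipeline perfectly (so the slowest stage sets the pace), and that all inputs are aggregate figures for the nodes doing the repair. The function name and the sample numbers are illustrative, not measurements.

```python
def estimate_rebuild(lost_gb: float, k: int, net_gbit_s: float,
                     decode_gb_s_per_core: float, cores: int,
                     write_gb_s: float) -> tuple[float, str]:
    """Return (seconds, bottleneck stage) for rebuilding lost_gb under a k+m code."""
    read_gb = k * lost_gb  # surviving fragments that must cross the network
    stage_seconds = {
        "network": read_gb / (net_gbit_s / 8),            # Gbit/s -> GB/s
        "cpu": read_gb / (decode_gb_s_per_core * cores),  # decode consumes k inputs per output
        "disk write": lost_gb / write_gb_s,               # only reconstructed fragments are written
    }
    bottleneck = max(stage_seconds, key=stage_seconds.get)
    return stage_seconds[bottleneck], bottleneck


# 4 TB of lost fragments, 4+2 code, 100 Gbit/s aggregate, 2 GB/s decode per core, 10 GB/s writes
print(estimate_rebuild(4000, 4, 100, 2.0, 8, 10.0))
print(estimate_rebuild(4000, 4, 100, 2.0, 4, 10.0))
```

With eight cores the network is the limit (about 1,280 s); with four cores the CPU becomes the limit (about 2,000 s). A data path that moves more GB/s per core shortens rebuild without any change to the drives.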

Measuring and Benchmarking Erasure Coding Rebuild Performance

A useful benchmark captures both recovery speed and user impact. Track time-to-heal, then track how far p95 and p99 move during repair. Use a workload that matches production and run it long enough to stabilize before you inject a failure.
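Computing the tail-latency shift only needs latency samples from a steady-state baseline window and from the repair window. This sketch uses Python's standard `statistics.quantiles` (inclusive method) to get percentiles; how you collect the samples (fio output, client-side histograms, and so on) is left open.

```python
import statistics


def percentile(samples_ms: list[float], pct: int) -> float:
    """pct-th percentile (1..99) from 99 inclusive cut points."""
    return statistics.quantiles(samples_ms, n=100, method="inclusive")[pct - 1]


def tail_delta(baseline_ms: list[float], degraded_ms: list[float]) -> dict[str, float]:
    """How far p95 and p99 moved between the baseline and degraded windows."""
    return {
        f"p{p}": percentile(degraded_ms, p) - percentile(baseline_ms, p)
        for p in (95, 99)
    }
```

Report the deltas alongside time-to-heal: a fast rebuild that doubles p99 and a slow one that barely moves it are very different outcomes.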

A repeatable test uses a fixed workload profile, a controlled fault, and consistent data volume. Run steady reads and writes, trigger a single drive loss or node loss, and measure rebuild read bandwidth, rebuild write bandwidth, CPU use, and network saturation while the workload continues. Collect queue depth and per-volume latency so you can spot hotspots and noisy neighbors.
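Once per-volume latency is collected during the fault window, a simple rule can surface hotspots and noisy neighbors: flag any volume whose p99 sits well above the fleet median. The 3x threshold below is an arbitrary assumption to tune per environment, and the input format (volume name mapped to p99 in milliseconds) is hypothetical.

```python
import statistics


def find_hotspots(p99_by_volume: dict[str, float], factor: float = 3.0) -> list[str]:
    """Return volumes whose p99 exceeds `factor` times the median p99 across volumes."""
    median = statistics.median(p99_by_volume.values())
    return sorted(v for v, p99 in p99_by_volume.items() if p99 > factor * median)
```

Using the median rather than the mean keeps one badly affected volume from raising the bar and hiding itself.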

For leadership reporting, keep the scorecard tight: time in degraded mode, p99 delta, and rebuild throughput per TB. Those numbers map to risk, customer impact, and capacity planning.
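The three scorecard numbers fall out of a few timestamps and measurements. This sketch reads "rebuild throughput per TB" as minutes spent per TB rebuilt, which is one reasonable interpretation rather than a standard definition; the field and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RebuildScorecard:
    degraded_seconds: float  # failure detected -> full protection restored
    p99_delta_ms: float      # p99 during repair minus steady-state p99
    minutes_per_tb: float    # repair time normalized by data rebuilt


def build_scorecard(failed_at_s: float, healthy_at_s: float,
                    p99_before_ms: float, p99_during_ms: float,
                    rebuilt_gb: float) -> RebuildScorecard:
    degraded = healthy_at_s - failed_at_s
    return RebuildScorecard(
        degraded_seconds=degraded,
        p99_delta_ms=p99_during_ms - p99_before_ms,
        minutes_per_tb=(degraded / 60) / (rebuilt_gb / 1000),
    )


print(build_scorecard(failed_at_s=0, healthy_at_s=1800,
                      p99_before_ms=2.0, p99_during_ms=5.5, rebuilt_gb=3600))
```

Normalizing by data rebuilt lets you compare a small-drive failure with a full-node failure on the same scale.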

Approaches for Improving Rebuild Outcomes at Scale

Most gains come from reducing contention and avoiding hotspots, not from running rebuild at max speed all the time.

- Cap rebuild bandwidth per pool and per tenant, and keep foreground I/O at a higher priority.

- Spread fragments across real failure domains, such as nodes, racks, and zones, to avoid concentrated rebuild reads.

- Add safe parallelism by pulling from more sources while watching top-of-rack congestion.

- Choose stripe width and parity levels that fit your CPU and network budget, not just capacity targets.

- Enforce QoS so a single workload cannot steal IOPS during degraded-mode operation.
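To weigh stripe width and parity against CPU and network budget, it helps to tabulate what each k+m choice costs. This sketch assumes a maximum-distance-separable code such as Reed-Solomon, where any k surviving fragments can rebuild a lost one, so each repair reads k fragments. Replication fits the same model as k = 1, so 3x replication appears as "1+2".

```python
def ec_profile(k: int, m: int) -> dict[str, float]:
    """Trade-offs of a k+m MDS code (e.g. Reed-Solomon); k=1 models replication."""
    return {
        "capacity_overhead": (k + m) / k,  # raw bytes stored per logical byte
        "failures_tolerated": m,           # any m fragments of a stripe may be lost
        "reads_per_repair": k,             # survivors read to rebuild one fragment
        "failure_domains_needed": k + m,   # one fragment per node, rack, or zone
    }


for k, m in [(1, 2), (4, 2), (8, 2), (8, 3)]:
    print(f"{k}+{m}", ec_profile(k, m))
```

Moving from 4+2 to 8+2 cuts overhead from 1.5x to 1.25x but doubles the fragments each repair must read, which is the CPU and network cost the list above warns about.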

Rebuild Performance Differences Across Storage Designs

Different protection methods are built in very different ways, so your expectations should match the design you choose.

| Data Protection Method | Capacity Overhead | Repair Workflow | Typical Tail-Latency Impact | Best Fit |
|---|---|---|---|---|
| 3× Replication | High | Copy full blocks | Moderate, bandwidth-driven | Hot tiers, smaller clusters |
| RAID-6 (single system) | Medium | Controller-led rebuild | Can spike under load | Traditional arrays |
| Distributed Erasure Coding | Lower | Network + CPU reconstruction | Low with strong QoS | Scale-out, SAN alternative |
| Hybrid (replicas + erasure coding) | Mixed | Fast hot-tier repair, efficient capacity tier | Often steady with tier rules | Mixed fleets |

Simplyblock™ Controls for Rebuild SLOs

Simplyblock focuses on keeping rebuild behavior controlled during failures, especially in Kubernetes Storage fleets where disruptions happen during normal work. Simplyblock uses an SPDK-based user-space design to reduce overhead and keep queues efficient under pressure. That efficiency helps the platform sustain rebuild throughput without starving applications.

The platform also targets the controls teams need during degraded mode: multi-tenancy, QoS, and consistent policy boundaries across pools and volumes. This model supports Software-defined Block Storage on commodity servers, including bare metal, and it supports both NVMe/TCP and NVMe/RoCEv2 where you need them. For organizations planning DPU or IPU adoption, a user-space, zero-copy architecture also aligns well with offload strategies.

Future Directions and Advancements in Faster Rebuilds

Rebuild methods continue to move toward lower read amplification and better locality. Declustered layouts, smarter fragment placement, and repair-aware scheduling can shorten degraded windows by spreading work across more nodes and links. Expect tighter feedback loops as well, where the system tunes the rebuild rate based on live latency and congestion signals.
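Such a feedback loop can be sketched with additive-increase/multiplicative-decrease (AIMD), the control idea TCP uses for congestion: raise the rebuild rate slowly while latency and link utilization look healthy, and cut it sharply when either crosses a target. AIMD is one plausible choice, not a documented simplyblock algorithm, and every threshold and default below is an assumption.

```python
def next_rebuild_rate(current_mb_s: float, p99_ms: float, target_p99_ms: float,
                      link_util: float, max_link_util: float = 0.85,
                      step_mb_s: float = 50.0, backoff: float = 0.5,
                      floor_mb_s: float = 50.0, ceiling_mb_s: float = 2000.0) -> float:
    """One AIMD control step for the rebuild rate, clamped to [floor, ceiling].

    Back off multiplicatively when latency or link congestion exceeds its target;
    otherwise probe upward additively.
    """
    congested = p99_ms > target_p99_ms or link_util > max_link_util
    proposed = current_mb_s * backoff if congested else current_mb_s + step_mb_s
    return max(floor_mb_s, min(ceiling_mb_s, proposed))
```

The floor keeps repair moving even under sustained pressure, so degraded mode cannot stretch indefinitely; the ceiling stops the probe from saturating links during quiet periods.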

Hardware trends will push this further. Faster NVMe helps, but CPU efficiency and network balance decide real outcomes at scale. DPUs and SmartNICs can also offload parts of the data path, which keeps the host CPU available for applications during repair events.

Related Terms

Teams often review these glossary pages alongside Erasure Coding Rebuild Performance.

Storage Pools

Storage Controller

Tail Latency

RADOS Block Device

Questions and Answers

Erasure coding rebuild speed is driven by how fast the system can read surviving fragments, decode parity, and write reconstructed shards without starving foreground I/O. The limiting factor is usually a mix of network bandwidth, target CPU for encoding/decoding, and per-node disk queueing. The chosen erasure coding scheme (k+m) also sets how many peers must participate per repair.

During rebuild, the cluster generates extra background reads and writes that compete with application traffic, so queues grow and tail latency rises first. The effect is amplified when small random writes trigger additional internal work and when degraded reads must fetch several fragments to reconstruct the requested data. Rising p99 with flat throughput is a sign that you’re hitting read amplification and shared-resource contention, not “slower media.”

Higher parity (larger m) improves fault tolerance but typically increases compute and cross-node reads required to rebuild, especially when multiple shards are missing or the cluster is busy. Stripe sizing also matters: it shapes how much data must be scanned and how efficiently decoding work is batched. If rebuilds are too slow, you often need more headroom (CPU/network) rather than simply changing the code.

Throttle rebuild bandwidth to protect workload latency, but keep it high enough to meet your target repair window so that a second failure doesn’t exceed your redundancy. A common approach is to reserve fixed “repair headroom” (CPU, bandwidth, IOPS) that rebuild traffic can consume, then enforce a ceiling during peak hours. This is the intent behind high-availability block storage design, where repair can’t starve foreground I/O.
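The floor-and-ceiling policy fits in a few lines. This sketch assumes the remaining rebuild volume and the time left in the repair window are known, and it lets the floor win when the two conflict, on the reasoning that overrunning the window risks a second failure beyond redundancy. The function name and parameters are hypothetical.

```python
def choose_rebuild_rate(remaining_bytes: float, window_left_s: float,
                        desired_bps: float, peak_ceiling_bps: float,
                        is_peak: bool) -> tuple[float, bool]:
    """Return (rate, headroom_ok) for the next scheduling interval.

    The floor is the rate needed to finish inside the repair window. During peak
    hours the rate is capped at peak_ceiling_bps unless that cap would miss the
    window; headroom_ok is False in that case, signaling too little repair headroom.
    """
    floor_bps = remaining_bytes / window_left_s
    ceiling_bps = peak_ceiling_bps if is_peak else float("inf")
    rate = max(floor_bps, min(desired_bps, ceiling_bps))
    return rate, ceiling_bps >= floor_bps
```

A `False` in the second field is a capacity-planning signal: the reserved headroom is too small for the configured window, so either add headroom or accept a longer window.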

Test rebuild while running a representative production workload mix, because idle-cluster rebuild numbers usually overestimate real performance. Induce a controlled shard loss, measure time-to-redundancy, and track p95/p99 latency, network utilization, CPU, and per-node queues during repair. If rebuild time varies widely between runs, you’re likely sensitive to hot spots, placement, or insufficient headroom under concurrency.
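To judge whether rebuild time varies widely between runs, the coefficient of variation (standard deviation divided by mean) across repeated fault-injection runs gives one comparable number. The 0.15 cutoff used here is an arbitrary assumption, not an industry threshold.

```python
import statistics


def rebuild_cv(durations_s: list[float]) -> float:
    """Coefficient of variation of rebuild durations from repeated runs (needs 2+ runs)."""
    return statistics.stdev(durations_s) / statistics.mean(durations_s)


def is_unstable(durations_s: list[float], max_cv: float = 0.15) -> bool:
    """True when run-to-run spread suggests hot spots, placement effects, or thin headroom."""
    return rebuild_cv(durations_s) > max_cv
```

Three runs at 600, 610, and 590 seconds stay well under the cutoff, while 600, 640, and 1,180 seconds clearly exceed it and point to investigating placement and headroom.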