Storage Performance Isolation


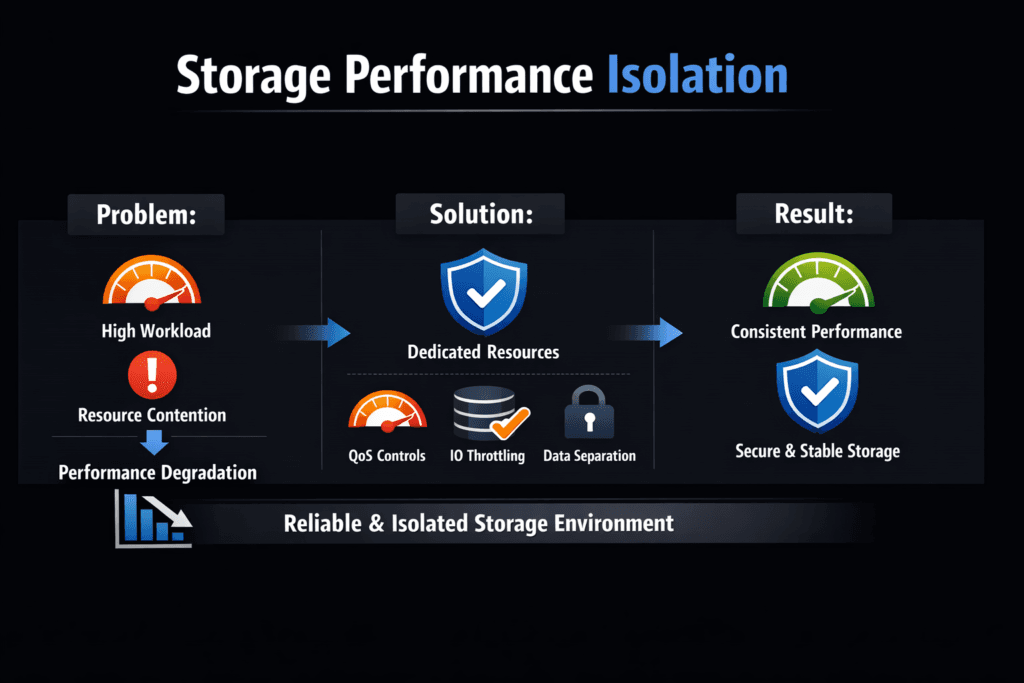

Storage Performance Isolation is the ability to keep one workload’s storage behavior from degrading another workload’s latency, IOPS, or throughput when they share the same media, CPU cycles, and network paths.

What breaks isolation in real systems? Queue contention, uneven CPU scheduling, interrupt storms, background rebuild or scrub traffic, cache eviction, and bursty east–west traffic often create “noisy neighbor” effects. Even when raw NVMe bandwidth looks high, tail latency can still spike, and that is what users notice first.

Why do executives care? Isolation protects revenue-impacting SLOs, reduces incident volume, and lets teams run higher utilization without turning shared infrastructure into an internal ticket factory. It also supports a SAN alternative strategy where pooled NVMe can replace legacy arrays while keeping firm controls on multi-tenant risk.

Optimizing Isolation with Current Platform Controls

Strong isolation starts with a clear policy model. Teams should decide which workloads need tight latency bounds, which can tolerate best-effort behavior, and which should never co-locate. Then they should apply limits at multiple layers because one control rarely fixes the full path.

At the node layer, Linux I/O controls and scheduler choices can reduce starvation, but they cannot fully isolate remote block storage once traffic fans out across shared NIC queues and storage targets. At the storage layer, per-volume QoS with admission control and throttling provides stronger guarantees because it governs the resource that actually saturates under load.
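As a minimal sketch of a node-level control, the helper below formats one entry for the cgroup v2 `io.max` file, which caps bytes per second and IOPS per block device. The helper name `io_max_line`, the device numbers (259:0), and the limit values are illustrative assumptions, not values taken from any specific deployment.

```python
from typing import Optional


def io_max_line(major: int, minor: int,
                rbps: Optional[int] = None, wbps: Optional[int] = None,
                riops: Optional[int] = None, wiops: Optional[int] = None) -> str:
    """Format one cgroup v2 io.max entry; None means no limit ("max")."""
    def fmt(value: Optional[int]) -> str:
        return "max" if value is None else str(value)

    return (f"{major}:{minor} rbps={fmt(rbps)} wbps={fmt(wbps)} "
            f"riops={fmt(riops)} wiops={fmt(wiops)}")


# Assumed example: cap writes at 200 MiB/s and reads at 20,000 IOPS
# on device 259:0, leaving the other two dimensions unlimited.
line = io_max_line(259, 0, wbps=200 * 1024 * 1024, riops=20000)
print(line)
```

Writing such a line into a cgroup's `io.max` file needs the `io` controller enabled and suitable privileges. The limit applies to the local block device as seen by that host, which is why it does not cover contention on shared NIC queues or remote storage targets.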


Storage Performance Isolation in Kubernetes Storage

Kubernetes Storage adds two pressures: fast churn and dense consolidation. Pods start, stop, reschedule, and autoscale, which changes I/O patterns by the hour. Namespaces and quotas help teams segment capacity, but performance isolation needs more than capacity limits.

Teams typically get better outcomes when they align StorageClasses to intent (latency-sensitive, general purpose, bulk), enforce per-tenant performance caps, and separate “hot” volumes from mixed pools. For database platforms and internal DBaaS, the platform should also map tenant identity to storage policy so limits follow the workload across nodes and zones.
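One way to picture intent-aligned StorageClasses is to generate one manifest per tier from a small table of limits. The sketch below is hedged: the provisioner name and the `qos_*` parameter keys are hypothetical placeholders, not the parameters of any real CSI driver, and the numbers are arbitrary. Only the top-level StorageClass fields are standard Kubernetes.

```python
import json
from typing import Dict

# Hypothetical placeholder; substitute the provisioner of your CSI driver.
PROVISIONER = "csi.example.com"

# Hypothetical per-tier limits. StorageClass parameter values must be strings.
TIERS: Dict[str, Dict[str, str]] = {
    "latency-sensitive": {"qos_max_iops": "50000", "qos_max_mbps": "800"},
    "general-purpose": {"qos_max_iops": "10000", "qos_max_mbps": "200"},
    "bulk": {"qos_max_iops": "2000", "qos_max_mbps": "400"},
}


def storage_class(tier: str, params: Dict[str, str]) -> dict:
    """Build a StorageClass manifest for one intent tier."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": f"block-{tier}"},
        "provisioner": PROVISIONER,
        "parameters": dict(params),  # driver-specific, opaque to Kubernetes
        "reclaimPolicy": "Delete",
        "volumeBindingMode": "WaitForFirstConsumer",
    }


manifests = [storage_class(tier, params) for tier, params in TIERS.items()]
print(json.dumps(manifests[0], indent=2))
```

Keeping the tier table in one place makes it easier to review limits as policy rather than as scattered per-volume settings.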

Storage Performance Isolation and NVMe/TCP

NVMe/TCP helps because it brings NVMe-oF semantics over standard Ethernet, with scalable queueing and parallelism that suits disaggregated storage and mixed Kubernetes deployments. Still, NVMe/TCP does not remove contention by itself.

The key is to keep the datapath efficient and consistent. CPU scheduling jitter, buffer pressure, and cross-tenant bursts can still amplify tail latency. A user-space, polled-mode datapath (SPDK-based) can reduce overhead and jitter by avoiding kernel-heavy copy paths, which makes NVMe/TCP behave more like local NVMe at scale, especially on bare-metal clusters and high-IOPS services.

Measuring and Benchmarking Isolation Performance

Isolation is not a single metric. Teams should measure how performance changes when a second workload (or tenant) increases load, then quantify the impact on p95 and p99 latency, not only averages.

Synthetic tests help you map ceilings, while application tests reveal real queue behavior, WAL bursts, compaction cycles, and retry storms. Use both, and keep the test harness stable across runs.

- Define a “victim” workload with a fixed latency target, then add an “aggressor” workload that ramps IOPS and bandwidth in steps.

- Track p50, p95, and p99 latency, plus IOPS, throughput, and CPU per I/O on both sides.

- Hold the storage topology constant (same nodes, same volume placement), and change one variable at a time.

- Repeat tests during background events (rebuild, snapshotting, replication) to see worst-case behavior.

- Report deltas: “aggressor at X caused victim p99 to rise by Y%,” which makes risk visible to stakeholders.
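The reporting step in the list above can be sketched as a small script. It assumes you already have per-I/O latency samples for the victim, once alone and once with the aggressor running. The function names and the sample numbers are made up for illustration, and the percentile uses the simple nearest-rank method rather than interpolation.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty sample set (pct in 1..100)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(samples: Sequence[float]) -> Dict[str, float]:
    """p50, p95, and p99 for one test run."""
    return {f"p{p}": percentile(samples, p) for p in (50, 95, 99)}


def delta_percent(baseline: Dict[str, float],
                  loaded: Dict[str, float]) -> Dict[str, float]:
    """Relative change of each percentile, in percent of the baseline."""
    return {k: (loaded[k] - baseline[k]) / baseline[k] * 100.0 for k in baseline}


# Made-up victim latencies in microseconds: alone, then with an aggressor.
victim_alone = [100.0] * 98 + [400.0, 500.0]
victim_loaded = [120.0] * 90 + [900.0] * 10

report = delta_percent(summarize(victim_alone), summarize(victim_loaded))
for name, change in report.items():
    print(f"victim {name} changed by {change:+.0f}%")
```

In this made-up data the median moves far less than p95 and p99, which is the pattern the delta report is meant to expose.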

Approaches for Improving Isolation Performance

Most teams combine controls across compute, network, and storage:

First, isolate at the storage layer with per-volume QoS limits for IOPS and bandwidth, plus burst rules that prevent short spikes from turning into long tail events. Second, shape network traffic so storage flows do not fight with east–west service traffic. Third, reserve CPU headroom for the storage datapath and CSI components, because starving the datapath often looks like “storage latency,” even when media sits idle.
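A token bucket is one common way to express "sustained limit plus bounded burst" for a volume. The sketch below is a generic illustration of that technique and is not a description of how simplyblock or any other platform implements QoS. The class name, rates, and timestamps are assumptions, and time is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBucket:
    rate: float    # sustained I/Os per second
    burst: float   # bucket capacity: the most I/Os admitted in one spike
    tokens: float = field(init=False)
    last: float = field(init=False, default=0.0)  # time of last refill, seconds

    def __post_init__(self) -> None:
        self.tokens = self.burst  # start full so an idle volume may burst

    def allow(self, now: float, cost: float = 1.0) -> bool:
        """Refill for the elapsed time, then admit the I/O if tokens remain."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should queue or throttle this I/O


# Assumed limits: 1,000 IOPS sustained with a burst allowance of 200 I/Os.
bucket = TokenBucket(rate=1000.0, burst=200.0)
spike = sum(bucket.allow(now=0.0) for _ in range(250))
later = sum(bucket.allow(now=0.125) for _ in range(150))
print(spike, later)
```

The burst size bounds how long a spike can run above the sustained rate. Once the bucket is empty, admissions fall back to the refill rate.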

For multi-tenant environments, policy enforcement must attach to tenant identity. That includes hard caps, fair sharing, priority tiers, and guardrails during maintenance events, such as rebuilds and rebalancing. When the platform supports both hyper-converged storage and disaggregated storage, the same isolation model should apply in both modes so teams can mix architectures without changing operating rules.
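Fair sharing can be made concrete with max-min fairness: tenants that ask for less than an equal share get what they ask for, and the leftover is split among the rest. The sketch below is a textbook version of that idea under assumed tenant names and IOPS figures. It does not model priority tiers or weights, and it is not presented as any platform's scheduler.

```python
from typing import Dict


def max_min_fair(capacity: float, demands: Dict[str, float]) -> Dict[str, float]:
    """Split capacity so no tenant gets more than it demands and
    unsatisfied tenants share the remainder equally."""
    alloc = {tenant: 0.0 for tenant in demands}
    remaining = capacity
    pending = sorted(demands, key=lambda t: demands[t])  # smallest demand first
    while pending and remaining > 0:
        share = remaining / len(pending)
        tenant = pending[0]
        if demands[tenant] <= share:
            # Demand fits within an equal share: satisfy it fully.
            alloc[tenant] = demands[tenant]
            remaining -= demands[tenant]
            pending.pop(0)
        else:
            # Every pending tenant wants more than the share: split evenly.
            for t in pending:
                alloc[t] = share
            remaining = 0.0
            pending = []
    return alloc


# Assumed pool of 100,000 IOPS and three tenants with different demands.
demands = {"tenant-a": 10000.0, "tenant-b": 40000.0, "tenant-c": 90000.0}
shares = max_min_fair(100000.0, demands)
print(shares)
```

A hard cap fits into the same model by clamping each tenant's demand to its cap before the split.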

Side-by-Side Comparison of Isolation Strategies

The table below summarizes common isolation mechanisms and where they fit best.

| Isolation strategy | Where it acts | Strength | Limitation | Best fit |
|---|---|---|---|---|
| Node-level controls (Linux scheduler, cgroups) | Per host | Easy to deploy, good for local contention | Weaker for remote paths and shared targets | Small clusters, single-tenant nodes |
| Network shaping (QoS, rate limits) | Per link / fabric | Protects shared NICs and uplinks | Cannot control backend media contention | Disaggregated storage over shared Ethernet |
| Storage-side QoS (per-volume limits, fair sharing) | At the storage service | Governs the resource that actually saturates under load | Requires a platform that enforces policy in the datapath | Multi-tenant Kubernetes Storage, DBaaS |
| Dedicated pools / isolation domains | Topology-level | Hard isolation, simple to reason about | Lower utilization, higher cost | Regulated workloads, strict latency tiers |

Simplyblock™ for Multi-Tenant Control

Simplyblock is built for Software-defined Block Storage with a fast SPDK-based datapath that targets low overhead and low jitter. That matters for isolation because it keeps CPU per I/O tight, which reduces variability under load.

For Kubernetes Storage, simplyblock supports flexible deployment patterns, including hyper-converged, disaggregated, and hybrid layouts, so teams can place performance-critical volumes close to compute or centralize pools for easier operations. With NVMe/TCP (and NVMe/RoCEv2 where needed), the platform can enforce multi-tenancy and QoS policies so one tenant cannot consume the queue, the bandwidth, or the CPU budget that another tenant depends on. That combination helps platform teams protect tail latency while still running high utilization across shared NVMe capacity.

Future Directions and Advancements in Storage Performance Isolation

Isolation will keep moving closer to the hardware boundary. DPUs and IPUs can offload parts of the storage datapath, reduce host CPU variance, and enforce policy closer to the wire. Expect tighter integration between telemetry (eBPF-style observability), policy engines, and storage schedulers that adapt in near real time to workload phase changes.

On the standards side, continued NVMe-oF transport maturity and wider adoption of per-tenant control models will push more deterministic behavior over Ethernet fabrics. For Kubernetes, teams will likely standardize on intent-driven StorageClasses that map directly to SLO targets, with automated verification that proves isolation under noisy-neighbor load before production rollout.

Related Terms

Teams often review these glossary pages alongside Storage Performance Isolation.

Storage Quality of Service (QoS)

Storage Resource Quotas in Kubernetes

SLO (Service Level Objective)

SLA (Service Level Agreement)

Questions and Answers

Performance isolation is proven by fairness under mixed load, not peak throughput. Run at least two tenants with conflicting patterns and compare per-tenant p95/p99 latency, IOPS, and bandwidth share as load rises. If one tenant’s burst pushes another tenant’s p99 up while the second tenant’s own workload stays unchanged, isolation is weak. That fairness test is the practical definition of performance isolation in multi-tenant storage.

Strong isolation usually combines per-volume limits, fair scheduling across queues, and shaping of background work like rebuild or compaction. It fails when limits are only “best effort,” when queueing happens above the control point, or when CPU/IRQ pressure makes completions uneven. The result is that one workload can monopolize the hot path even if raw bandwidth looks available.

Noisy neighbors happen when multiple tenants share the same pool and one workload dominates queues, caches, or CPU cycles, forcing others to wait. The pain shows up first as tail-latency spikes and retry storms, especially for databases with sync writes. To reason about this, model it explicitly as I/O contention and correlate spikes with queue depth and CPU saturation.

Set a p99 latency target per tier, then cap each tenant’s concurrency so the system stays below the queueing knee during normal load. When a tenant exceeds its budget, throttle that tenant first instead of letting the whole cluster drift. This avoids the common trap where average latency looks fine while user-facing SLOs break. Track p99 storage latency as the main guardrail.
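The "queueing knee" can be illustrated with two standard results from queueing theory. The sketch below assumes a single-server M/M/1 model, which is a deliberate simplification of a real storage path, and it works with mean latency rather than p99. The service time and IOPS figures are made up. Little's law then turns a throughput and latency target into a budget of outstanding I/Os.

```python
def mm1_mean_latency(service_time_s: float, utilization: float) -> float:
    """Mean response time of an M/M/1 queue: S / (1 - rho)."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return service_time_s / (1.0 - utilization)


def concurrency_budget(target_iops: float, mean_latency_s: float) -> float:
    """Little's law: mean outstanding I/Os = throughput x mean latency."""
    return target_iops * mean_latency_s


# Assumed 100 microsecond service time; latency climbs steeply past the knee.
for rho in (0.5, 0.8, 0.9, 0.95):
    latency_us = mm1_mean_latency(100e-6, rho) * 1e6
    print(f"utilization {rho:.2f}: mean latency {latency_us:.0f} us")

# Assumed tenant target: 20,000 IOPS at 500 microseconds mean latency.
budget = concurrency_budget(20000.0, 500e-6)
print(f"outstanding I/O budget: {budget:.0f}")
```

Under this model, moving from 50% to 90% utilization raises mean latency fivefold, which is why a concurrency cap set below the knee protects the tail better than a cap set at peak throughput.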

Isolation must hold during degraded mode, when rebuild traffic competes with production I/O. Induce a controlled failure, keep foreground load constant, and measure whether per-tenant p99 remains bounded while the system restores protection. If rebuild work steals bandwidth unevenly, tenants with small but sync-heavy workloads will degrade first. A good platform shapes background work so recovery doesn’t redefine “normal.”