AccessModes in Kubernetes Storage

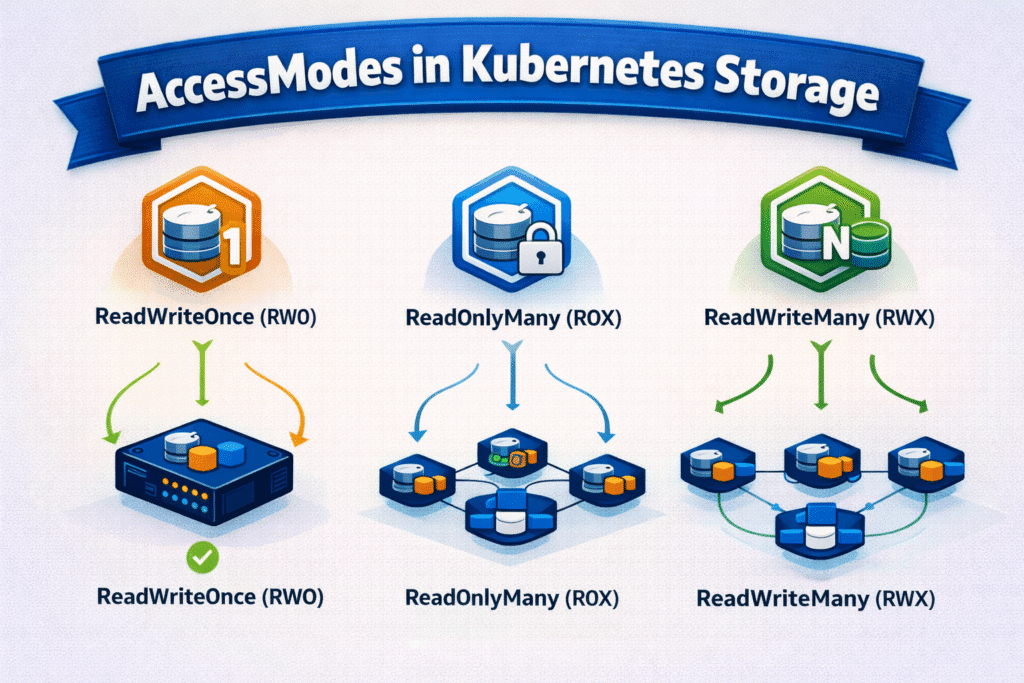

AccessModes in Kubernetes Storage define how pods can mount a PersistentVolume through a PersistentVolumeClaim. They do not set performance. They set the allowed read and write pattern, which shapes safe scaling, failover, and recovery behavior for stateful apps.

Kubernetes supports four access modes. ReadWriteOnce (RWO) allows read and write access from a single node at a time, so several pods on that node can still share the volume. ReadOnlyMany (ROX) allows many nodes to mount the volume as read-only. ReadWriteMany (RWX) allows many nodes to mount the same volume with read and write access. ReadWriteOncePod (RWOP) limits the volume to a single pod across the whole cluster, which helps protect strict single-writer designs.
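As a minimal sketch of how a claim requests one of these modes, the Python snippet below builds a PersistentVolumeClaim manifest as a plain dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The claim name, StorageClass name, and size are placeholders chosen for illustration, not values from a real cluster.

```python
import json

# The four access mode strings accepted in a PVC or PV spec.
ACCESS_MODES = ("ReadWriteOnce", "ReadOnlyMany", "ReadWriteMany", "ReadWriteOncePod")


def build_pvc(name, access_mode, size, storage_class):
    """Return a PersistentVolumeClaim manifest as a dict."""
    if access_mode not in ACCESS_MODES:
        raise ValueError(f"unknown access mode: {access_mode}")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": [access_mode],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical names and size, for illustration only.
pvc = build_pvc("db-data", "ReadWriteOnce", "10Gi", "example-block")
print(json.dumps(pvc, indent=2))
```

Whether the claim binds still depends on the StorageClass and CSI driver actually supporting the requested mode.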

Platform Tuning for Reliable Volume Access

Access modes work best when platform teams treat them as a contract. App teams pick the smallest mode that matches the workload. Platform teams back that choice with a consistent StorageClass policy, clear topology rules, and stable volume lifecycle behavior.

Most production databases and queues run well with RWO because the app already handles leader election and replication. RWX fits shared write use cases, but it often shifts the design toward a shared filesystem layer and adds its own failure risks. RWOP works well when you want a hard “one pod writes” guarantee, even during reschedules.
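The "smallest mode that matches the workload" rule can be sketched as a small decision helper. This is an illustrative heuristic only: the function name and its three inputs are assumptions made for this example, and real choices also depend on what the storage backend supports.

```python
def smallest_access_mode(needs_write, multi_node, strict_single_pod=False):
    """Pick the narrowest access mode for a workload (illustrative heuristic)."""
    if strict_single_pod and multi_node:
        raise ValueError("a one-pod guarantee cannot span multiple nodes")
    if needs_write and multi_node:
        return "ReadWriteMany"  # shared writers across nodes
    if needs_write:
        # One writer: RWOP for a hard one-pod guarantee, otherwise RWO.
        return "ReadWriteOncePod" if strict_single_pod else "ReadWriteOnce"
    if multi_node:
        return "ReadOnlyMany"  # many readers, no writers
    return "ReadWriteOnce"  # single-node consumer; RWO is the usual default


# A leader-based database with one writer pod.
print(smallest_access_mode(needs_write=True, multi_node=False, strict_single_pod=True))
# A shared build cache written from several nodes.
print(smallest_access_mode(needs_write=True, multi_node=True))
```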

How AccessModes in Kubernetes Storage Affect Scheduling and Volume Lifecycle

Access modes influence binding and placement. A claim requests an access mode, and Kubernetes matches it to a compatible volume. On each node, the kubelet and the CSI node plugin perform attach and mount actions that must follow the requested mode.
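The matching step can be approximated as a subset check: a volume is a candidate only if it declares every mode the claim requests. The sketch below is a deliberate simplification, since real binding also weighs capacity, StorageClass, volume mode, and selectors. The function name is hypothetical.

```python
def modes_compatible(pv_modes, claim_modes):
    """True when every mode the claim requests is declared by the volume."""
    return set(claim_modes) <= set(pv_modes)


# A volume that declares two modes can satisfy a claim for either one.
pv_modes = ["ReadWriteOnce", "ReadOnlyMany"]
print(modes_compatible(pv_modes, ["ReadWriteOnce"]))
print(modes_compatible(pv_modes, ["ReadWriteMany"]))
```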

RWO and RWOP reduce the risk of split-brain writes, but they can increase recovery steps during node failure. The cluster must move the writer cleanly before the app resumes writes. RWX can improve flexibility for some shared data patterns, yet it can create data safety issues if the backend does not enforce correct semantics under failure.

NVMe/TCP as a Low-Jitter Data Path for Stateful Apps

Access modes set the rule, and the data path determines how cleanly the platform can follow that rule under load. NVMe/TCP often helps reduce latency variance on Ethernet networks, which can improve mount reliability and reduce rollout drag for stateful workloads.

When you pair NVMe/TCP with an SPDK-style user-space path, you can also cut CPU overhead per I/O and reduce jitter during busy periods. That matters when nodes run both application compute and storage-related work. These gains show up as steadier pod readiness times and fewer noisy storage events during scaling.

Measuring Access Mode Impact on Startup and Recovery

Access modes do not change raw throughput by themselves, but they shape time-to-ready and time-to-recover. Track the time from pod scheduling to containers ready, and correlate it with volume attach and mount events. Watch for multi-attach conflicts, detach delays, and repeated mount retries, especially during node drains and rolling updates.
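One way to track time-to-ready is to compare the `PodScheduled` and `Ready` entries in a pod's `status.conditions`, for example from `kubectl get pod -o json`. The sketch below assumes the conditions are already loaded as a list of dictionaries; the sample timestamps are invented for illustration.

```python
from datetime import datetime


def parse_ts(ts):
    """Parse an RFC 3339 timestamp such as 2024-01-01T10:00:00Z."""
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def time_to_ready(conditions):
    """Seconds between the PodScheduled and Ready transitions, or None."""
    times = {
        c["type"]: parse_ts(c["lastTransitionTime"])
        for c in conditions
        if c.get("status") == "True"
    }
    if "PodScheduled" not in times or "Ready" not in times:
        return None
    return (times["Ready"] - times["PodScheduled"]).total_seconds()


# Invented sample data in the shape of status.conditions.
sample = [
    {"type": "PodScheduled", "status": "True", "lastTransitionTime": "2024-01-01T10:00:00Z"},
    {"type": "Ready", "status": "True", "lastTransitionTime": "2024-01-01T10:00:42Z"},
]
print(time_to_ready(sample))
```

Collected across a node drain or rolling update, these durations can be set beside attach and mount events to see which reschedules were slowed by storage.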

For workload-level data path checks, run a benchmark that matches your I/O pattern, then compare p95 and p99 latency during normal load and during disruptive events. If tail latency spikes during reschedules, review topology choices, node CPU pressure, and storage QoS behavior.
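The comparison can be sketched with a nearest-rank percentile over latency samples from each run. The sample values below are made up, and the helper names are hypothetical; in practice the samples would come from the benchmark tool's per-I/O latency log.

```python
def percentile(samples, p):
    """Nearest-rank percentile for an integer p between 1 and 100."""
    ordered = sorted(samples)
    rank = (p * len(ordered) + 99) // 100  # ceil(p * n / 100) in integer math
    return ordered[max(rank, 1) - 1]


def tail_ratio(baseline, disrupted, p):
    """How many times higher the p-th percentile is during disruption."""
    return percentile(disrupted, p) / percentile(baseline, p)


# Made-up latencies in milliseconds: steady state vs. during a node drain.
baseline = [0.5] * 95 + [1.0] * 5
disrupted = [0.5] * 90 + [4.0] * 10

for p in (95, 99):
    print(f"p{p}: {tail_ratio(baseline, disrupted, p):.1f}x baseline")
```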

Approaches for Improving AccessModes in Kubernetes Storage Performance

Pick the smallest access mode that matches how the app writes. Standardize a few StorageClasses that map to clear access patterns and service tiers. Align placement rules with the storage topology so the cluster does not waste time on failed attaches and late remounts.
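A standardized set of StorageClasses lends itself to a simple policy check. The sketch below assumes a hypothetical policy that maps two made-up StorageClass names to the access modes each may serve, then flags claims that fall outside it. A real platform would more likely enforce this with an admission policy, which this example does not model.

```python
# Hypothetical policy: StorageClass name -> access modes it is allowed to serve.
POLICY = {
    "block-single-writer": {"ReadWriteOnce", "ReadWriteOncePod"},
    "file-shared": {"ReadWriteMany", "ReadOnlyMany"},
}


def policy_violations(pvcs, policy=POLICY):
    """Return (claim name, reason) for each claim that breaks the policy."""
    problems = []
    for pvc in pvcs:
        name = pvc["metadata"]["name"]
        spec = pvc["spec"]
        sc = spec.get("storageClassName")
        allowed = policy.get(sc)
        if allowed is None:
            problems.append((name, f"StorageClass {sc!r} is not in the policy"))
            continue
        extra = set(spec.get("accessModes", [])) - allowed
        if extra:
            problems.append((name, f"modes {sorted(extra)} not allowed for {sc!r}"))
    return problems


# Invented claims: the second asks a block class for shared writes.
claims = [
    {"metadata": {"name": "db-data"},
     "spec": {"storageClassName": "block-single-writer", "accessModes": ["ReadWriteOnce"]}},
    {"metadata": {"name": "cache"},
     "spec": {"storageClassName": "block-single-writer", "accessModes": ["ReadWriteMany"]}},
]
for name, reason in policy_violations(claims):
    print(f"{name}: {reason}")
```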

If an app needs shared writes, confirm that the backend supports RWX semantics under failure and recovery, not just during a happy path. If the app follows a leader-based model, prefer RWO or RWOP, and keep the writer transition clean. Protect critical workloads with storage QoS so bulk jobs do not delay mounts, rebuild work, or steady p99 I/O.

AccessModes Tradeoffs at a Glance

This table summarizes practical differences between access modes and what they imply for operations and backend choice.

| AccessMode | What it allows | Typical fit | Common risk if misused |
|---|---|---|---|
| ReadWriteOnce (RWO) | Read/write on one node at a time | Most StatefulSets, databases, queues | Multi-attach errors during failover |
| ReadWriteOncePod (RWOP) | Read/write by one pod only | Strict single-writer apps | Blocks patterns that expect shared mounts |
| ReadOnlyMany (ROX) | Read-only on many nodes | Reference data, shared configs | App fails when it needs writes |
| ReadWriteMany (RWX) | Read/write on many nodes | Shared workspaces, build caches | Data safety issues if the backend does not enforce shared-write semantics under failure |

Operational Consistency for AccessModes with Simplyblock™

Simplyblock™ targets a common Kubernetes reality: many production stateful services run best with RWO or RWOP, and they need stable latency through change events. Simplyblock delivers Software-defined Block Storage built around NVMe/TCP and an SPDK-based, user-space data path, which helps reduce CPU overhead and keep tail latency under control.

That design supports hyper-converged, disaggregated, and mixed deployments. It also supports multi-tenancy and QoS, so one tenant’s burst does not erode another tenant’s mount behavior or p99 latency. For teams building a SAN alternative with Kubernetes-first operations, that combination can tighten rollout time and reduce storage-driven incident churn.

What’s Next for AccessModes and CSI Behavior

Kubernetes keeps tightening access guarantees, and RWOP plays a bigger role in strict single-writer setups. CSI drivers also keep improving topology hints and health signals, which help clusters avoid multi-attach conflict loops and shorten failover time.

On the infrastructure side, DPUs and IPUs are likely to offload more storage and network work, and NVMe/TCP looks set to remain a practical default transport on Ethernet.

Related Terms

Teams often review these glossary pages alongside AccessModes in Kubernetes Storage when they standardize Kubernetes Storage policies and Software-defined Block Storage tiers:

- StorageClass

- Dynamic Provisioning in Kubernetes

- Storage Quality of Service (QoS)

- Persistent Volume Claim (PVC)

- Kubernetes ReadWriteOncePod

Questions and Answers

AccessModes (ReadWriteOnce, ReadOnlyMany, ReadWriteMany, ReadWriteOncePod) dictate how a PersistentVolume can be mounted across pods and nodes. The Kubernetes control plane uses them when it binds claims to volumes and schedules pods, while enforcement depends on the CSI driver and backend capabilities.

ReadWriteOnce (RWO) allows a single node to mount the volume with read/write permissions. ReadWriteMany (RWX) supports multiple concurrent mounts across nodes. Not all CSI drivers offer RWX; for scalable access patterns, use backends supporting shared persistent storage.

For latency-sensitive workloads like databases or analytics engines, RWO paired with NVMe-over-TCP block storage is a common fit. RWO restricts the volume to a single node, which avoids cross-node write contention and helps maintain data consistency at the filesystem level. The access mode itself does not change raw performance.

A PersistentVolume can declare multiple AccessModes (e.g., ReadWriteOnce, ReadOnlyMany), but it can be mounted with only one of them at a time, namely the mode requested by the PVC. Ensure that StorageClass parameters and the CSI driver align with all declared modes to avoid mount errors.

Using strict AccessModes like ReadWriteOnce or ReadOnlyMany limits cross-pod data exposure, enhancing multi-tenant isolation. In secure environments, this is combined with per-volume encryption and namespace-based RBAC for fine-grained access control.